深度学习模型在C++平台的部署

代码语言:javascript。代码语言:javascript。

·

Python训练和导出

代码语言:javascript

AI代码解释

import torch

import torch.nn as nn

import torch.optim as optim

from torchvision import datasets, transforms

from torch.functional import F

# 定义简单的CNN模型

class SimpleCNN(nn.Module):

def __init__(self):

super(SimpleCNN, self).__init__()

self.conv1 = nn.Conv2d(1, 16, kernel_size=3, stride=1, padding=1)

self.pool = nn.MaxPool2d(2, 2)

self.conv2 = nn.Conv2d(16, 32, kernel_size=3, stride=1, padding=1)

self.fc1 = nn.Linear(32 * 7 * 7, 128)

self.fc2 = nn.Linear(128, 10)

def forward(self, x):

x = self.pool(F.relu(self.conv1(x)))

x = self.pool(F.relu(self.conv2(x)))

x = x.view(-1, 32 * 7 * 7)

x = F.relu(self.fc1(x))

x = self.fc2(x)

return x

# 数据预处理

transform = transforms.Compose([

transforms.ToTensor(),

transforms.Normalize((0.1307,), (0.3081,))

])

# 加载训练数据

train_dataset = datasets.MNIST('data', train=True, download=True, transform=transform)

train_loader = torch.utils.data.DataLoader(train_dataset, batch_size=64, shuffle=True)

# 初始化模型、损失函数和优化器

model = SimpleCNN()

criterion = nn.CrossEntropyLoss()

optimizer = optim.Adam(model.parameters(), lr=0.001)

# 训练模型

def train(model, train_loader, criterion, optimizer, epochs=5):

model.train()

for epoch in range(epochs):

running_loss = 0.0

for batch_idx, (data, target) in enumerate(train_loader):

optimizer.zero_grad()

output = model(data)

loss = criterion(output, target)

loss.backward()

optimizer.step()

running_loss += loss.item()

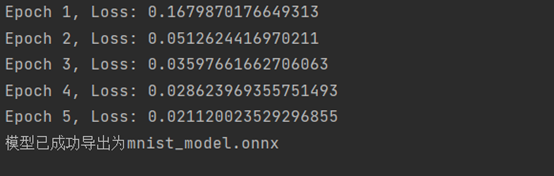

print(f'Epoch {epoch+1}, Loss: {running_loss/len(train_loader)}')

# 训练模型

train(model, train_loader, criterion, optimizer)

# 导出为ONNX格式

dummy_input = torch.randn(1, 1, 28, 28)

torch.onnx.export(

model,

dummy_input,

"mnist_model.onnx",

export_params=True,

opset_version=11,

do_constant_folding=True,

input_names=['input'],

output_names=['output'],

dynamic_axes={'input': {0: 'batch_size'}, 'output': {0: 'batch_size'}}

)

print("模型已成功导出为mnist_model.onnx")

2. C++ 部署和推理

代码语言:javascript

AI代码解释

#include <iostream>

#include <vector>

#include <opencv2/opencv.hpp>

#include <onnxruntime_cxx_api.h>

int main() {

// 初始化环境

Ort::Env env(ORT_LOGGING_LEVEL_WARNING, "MNIST");

Ort::SessionOptions session_options;

session_options.SetIntraOpNumThreads(1);

session_options.SetGraphOptimizationLevel(GraphOptimizationLevel::ORT_ENABLE_ALL);

// 加载模型

std::wstring model_path = L"mnist_model.onnx";

Ort::Session session(env, model_path.c_str(), session_options);

// 准备输入

std::vector<int64_t> input_shape = { 1, 1, 28, 28 };

size_t input_tensor_size = 28 * 28;

std::vector<float> input_tensor_values(input_tensor_size);

// 读取测试图片

cv::Mat test_image = cv::imread("test.jpg", cv::IMREAD_GRAYSCALE);

// 将Mat数据复制到vector中

for (int i = 0; i < test_image.rows; ++i) {

for (int j = 0; j < test_image.cols; ++j) {

input_tensor_values[i * test_image.cols + j] = static_cast<float>(test_image.at<uchar>(i, j)); // 注意:uchar是unsigned char的缩写,表示无符号字符,通常用于存储灰度值

}

}

// 创建输入张量

auto memory_info = Ort::MemoryInfo::CreateCpu(OrtArenaAllocator, OrtMemTypeDefault);

Ort::Value input_tensor = Ort::Value::CreateTensor<float>(

memory_info, input_tensor_values.data(), input_tensor_size, input_shape.data(), 4);

// 设置输入输出名称

std::vector<const char*> input_names;

std::vector<const char*> output_names;

input_names.push_back(session.GetInputNameAllocated(0, Ort::AllocatorWithDefaultOptions()).get());

output_names.push_back(session.GetOutputNameAllocated(0, Ort::AllocatorWithDefaultOptions()).get());

// 运行推理

auto output_tensors = session.Run(

Ort::RunOptions{ nullptr },

input_names.data(),

&input_tensor,

1,

output_names.data(),

1);

// 获取输出结果

float* output = output_tensors[0].GetTensorMutableData<float>();

std::vector<float> results(output, output + 10);

// 找到预测的数字

int predicted_digit = 0;

float max_probability = results[0];

for (int i = 1; i < 10; i++) {

if (results[i] > max_probability) {

max_probability = results[i];

predicted_digit = i;

}

}

std::cout << "预测结果: " << predicted_digit << std::endl;

std::cout << "置信度分布:" << std::endl;

for (int i = 0; i < 10; i++) {

std::cout << "数字 " << i << ": " << results[i] << std::endl;

}

return 0;

}测试图片: 6

程序运行:

End.

更多推荐

已为社区贡献1条内容

已为社区贡献1条内容

所有评论(0)