YOLO深度学习(计算机视觉)一注意力模块无敌透彻(CBAM、SpatialAttention、C2PSA)

本文介绍了两种注意力机制在目标检测中的应用对比,重点讲解了CBAM模块的原理与实现。作者通过玉米雄穗检测案例,说明Transformer类注意力机制不适合微小目标检测,而轻量级的CBAM模块通过通道和空间双重注意力能有效强化小目标特征。文章详细展示了在YOLOv11中插入CBAM模块的代码实现过程,包括模块初始化、模型结构调整和训练配置优化。实验结果表明,CBAM能精准定位微小目标,同时保持较低的

提示:这里专门写得是针对适用于YOLO的注意力模块写法,对于所有人工智能框架在原理层面是没有任何的,但是代码方面仅使用于YOLO

一、【注意力】简单概念

由于我自己也是小白,非人工智能科班生临时学的,所以我也不深究其中的深层原理,大致了解一下是个什么东西就行

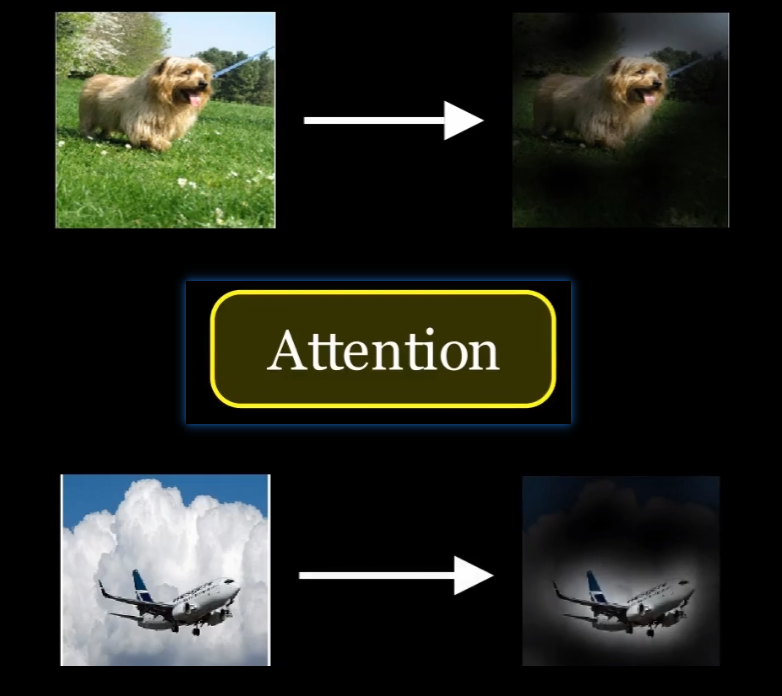

首先【注意力】顾名思义,就是让计算机专注于图像中我们关心的目标物体,而忽略周边的无关像素,而做法就是要调各个像素的参数权重,让专注的像素的参数权重高、其他的参数权重低

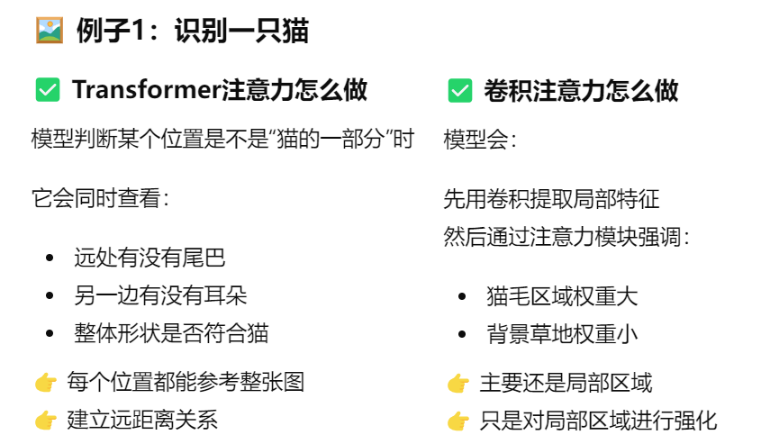

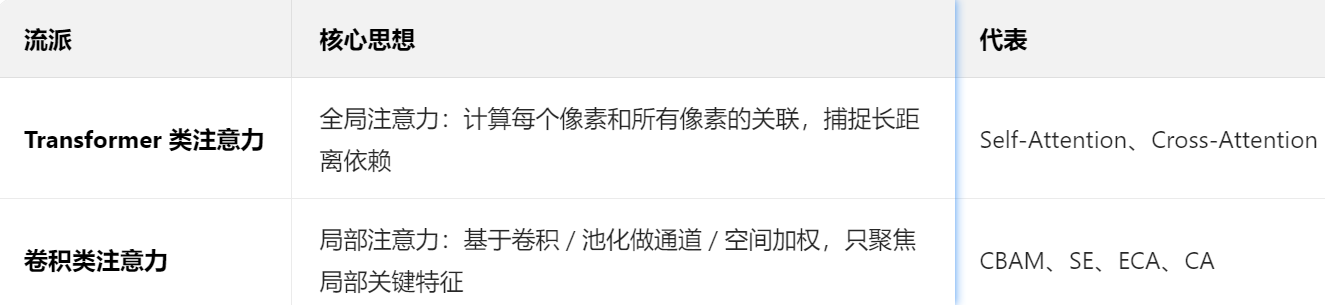

这就涉及了两大类注意力:【Transformer 类注意力机制】VS【卷积类注意力模块】

1、【Transformer 类注意力机制】

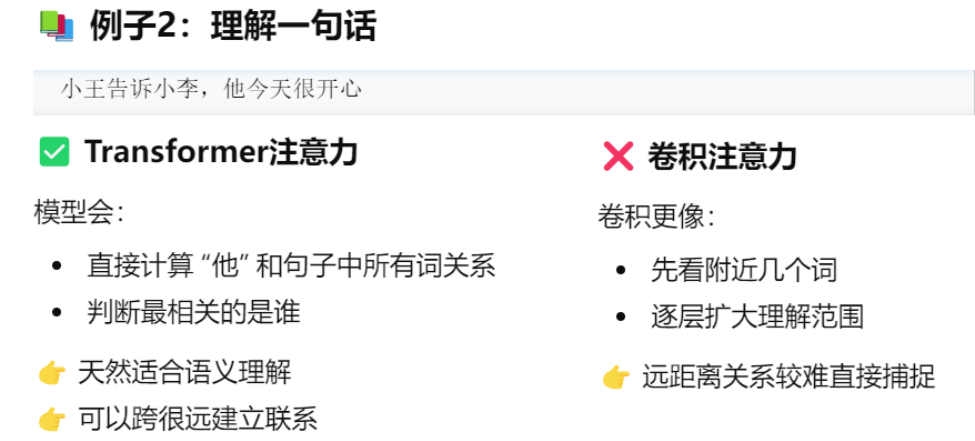

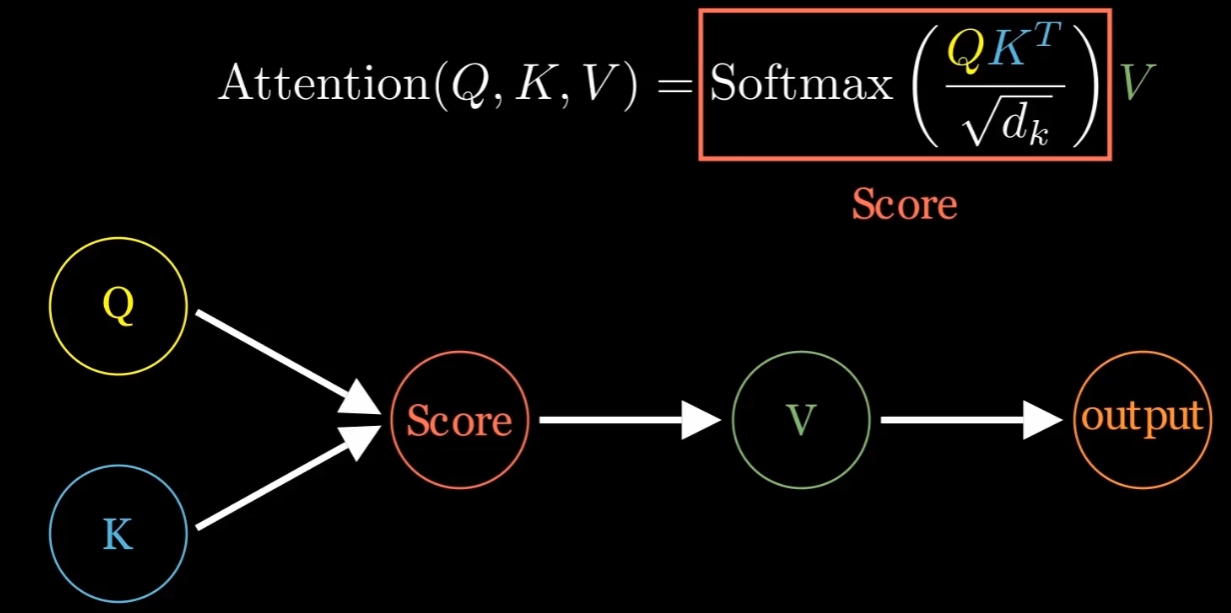

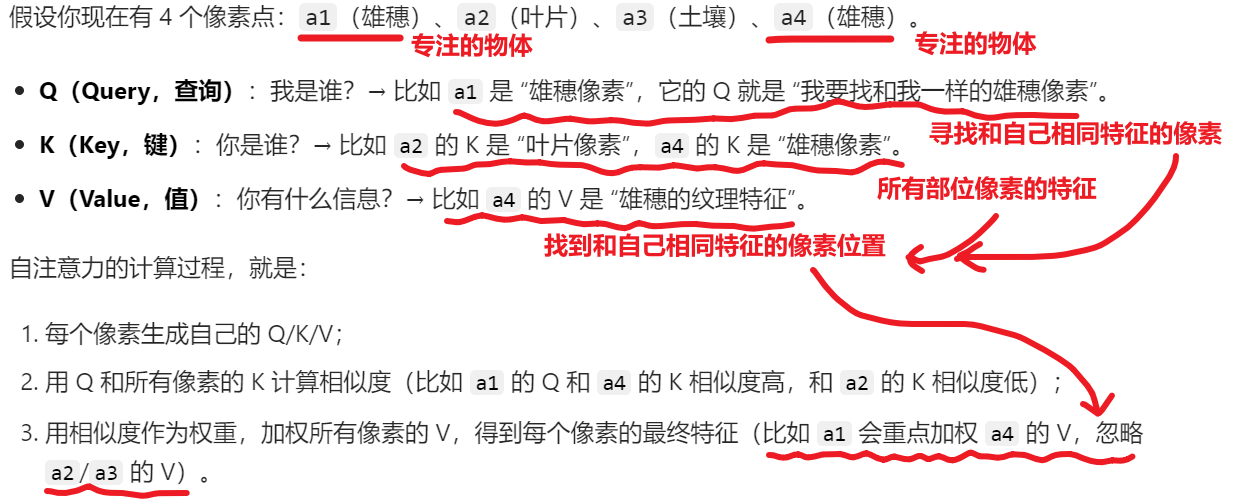

它的原理就是用下图这个公式得到结果:它的核心思想是通过对输入的每一个元素(Q、K、V)进行加权和计算,进而获得最相关的部分进行重点关注。

- 这个公式里涉及了3个参数:Q、K、V

- 大致结构如图,看一眼就够了,我只是想显得专业一点而已.....

至于我为什么要介绍这个【Transformer 类注意力机制】,是因为它跟我们YOLO目标检测要用到的注意力模块暂时无需关心,他最常用于NLP大语言模型,虽然现在计算机视觉领域也开始称王,但是作为初学者,像我这种SB很有可能会花时间去了解它,而到最后啥也没学会

这里我只是为了告诉各位【Transformer 类注意力机制】和【卷积类注意力模块】是两个不一样的东西,前者我们先不用,后者才是我们要用的。

2、【卷积类注意力模块】

卷积类注意力通常是指在卷积神经网络中通过局部感受野进行关注。与Transformer不同,卷积操作是基于局部区域的特征进行提取,因此它对局部空间的注意力更加专注,而不是全局的信息交互。卷积类注意力的计算方式更偏向于局部感知,适用于空间结构数据(如图像),而Transformer类注意力则更擅长处理长距离依赖的全局信息。当然这也决定了卷积类注意力只适用于计算机视觉,而非NLP大语言模型。

3、二者对比

- Transformer类注意力:

- 全局关注:可以通过【全局】信息建模,适合处理NLP语言的【长距离依赖】。

- 计算量大:由于需要计算所有输入元素之间的关系,计算开销相对较高。

- 灵活性强:能够处理任何类型的输入序列数据(如文本、语音等)。

- 卷积类注意力:

- 局部关注:主要聚焦于【局部】区域特征,只适用于【图像等】数据。

- 计算效率高:由于卷积操作具有局部性,计算效率比Transformer高。

- 不善处理长距离依赖:卷积结构不适合直接捕捉远距离信息,可能需要多层卷积来近似全局关系。

二、YOLO适用的卷积类注意力

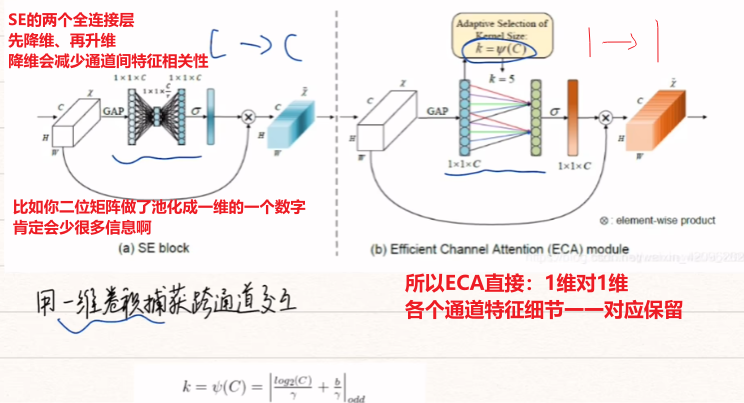

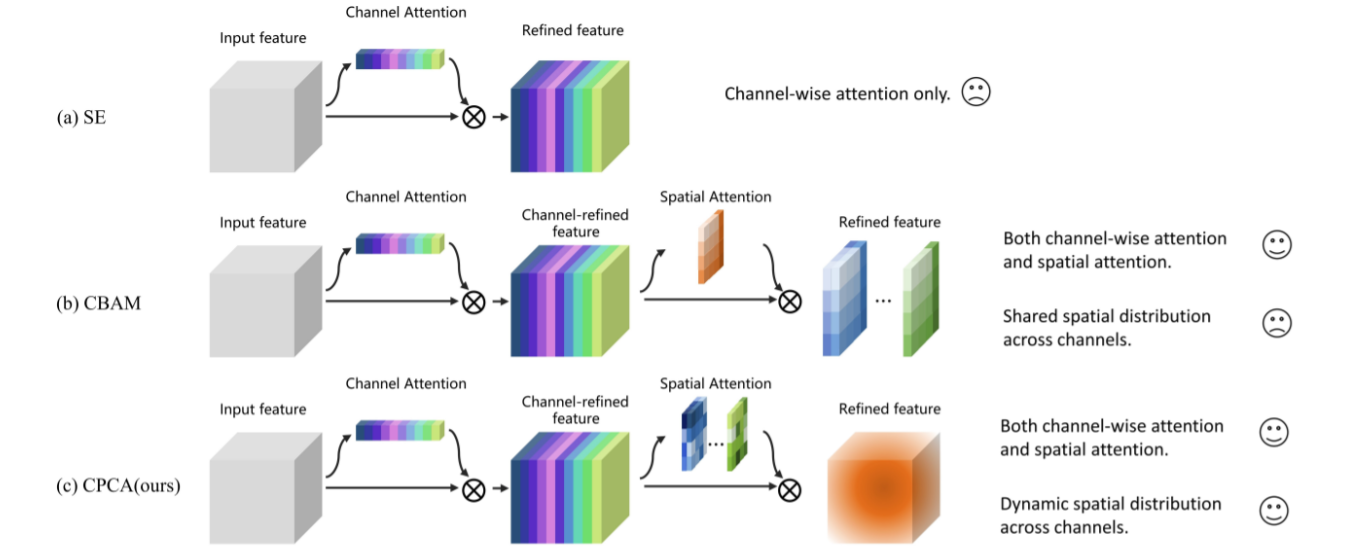

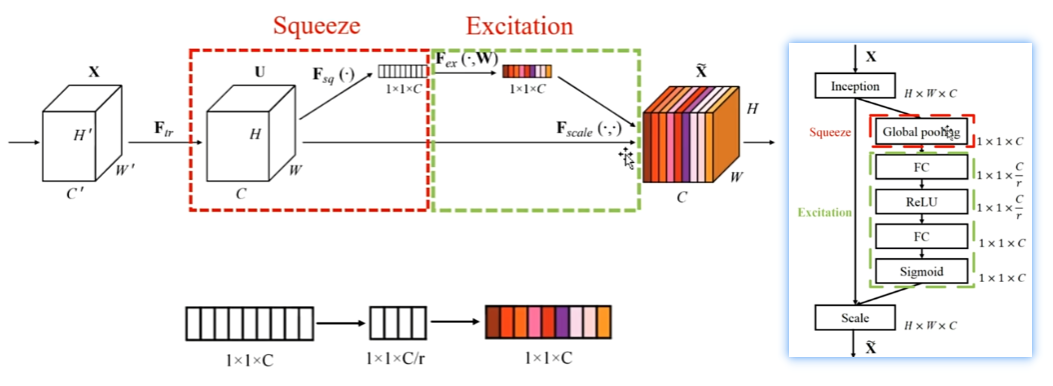

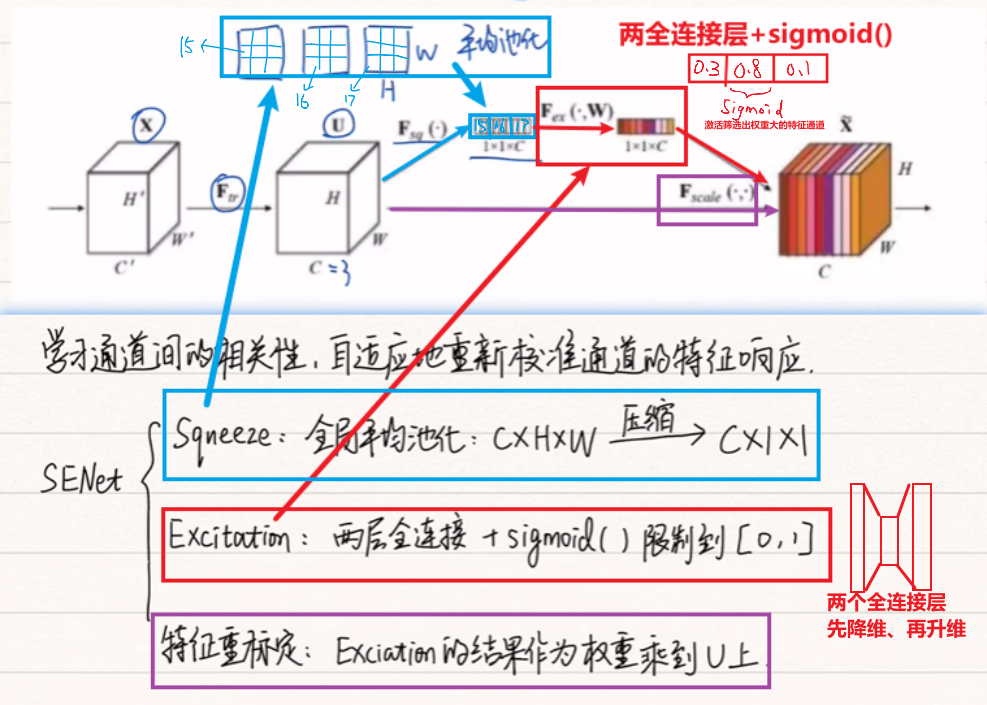

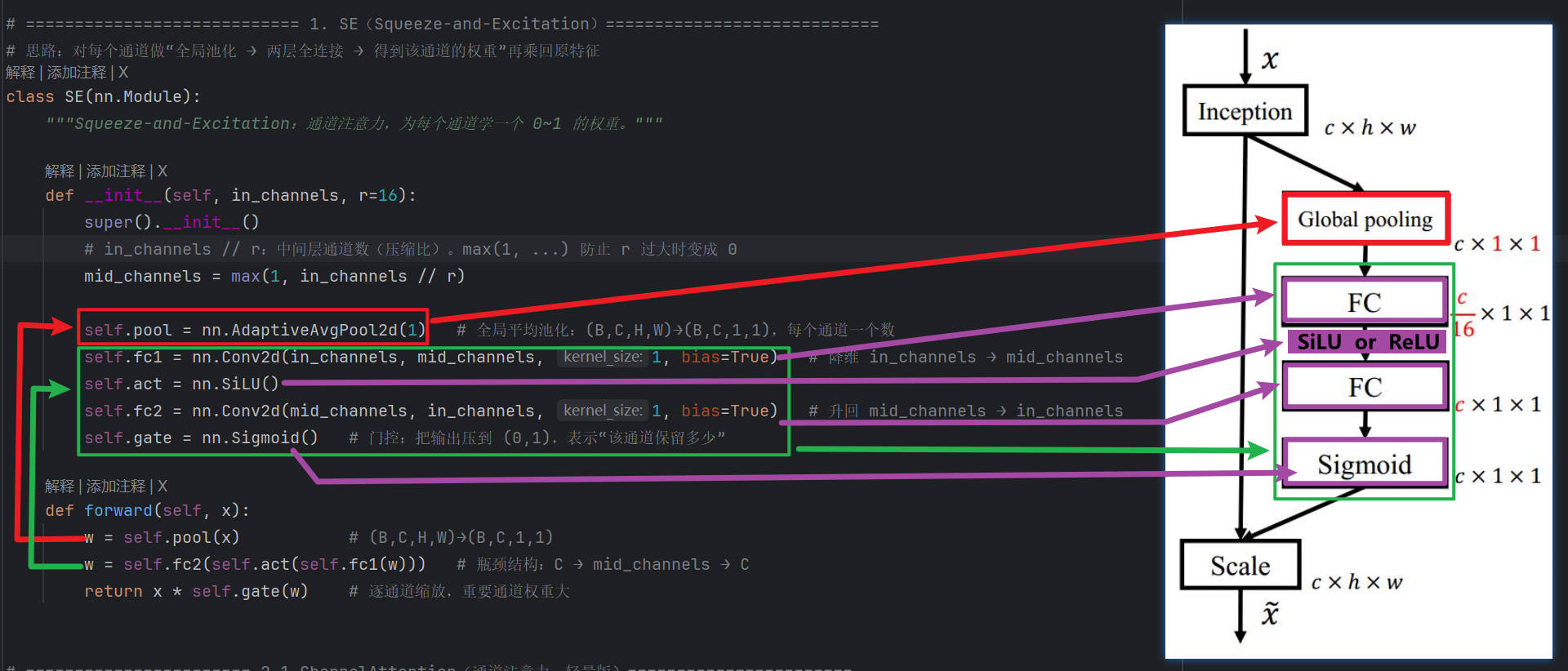

1、第一代:SEnet模块

- 结构:【平均池化压缩】+【2全连接层 + Sigmoid()函数】+【结果乘上输入权重】

- 特点:

- ✔ 只做通道注意力

- ✔ 结构极简

- ❌ 不知道空间位置

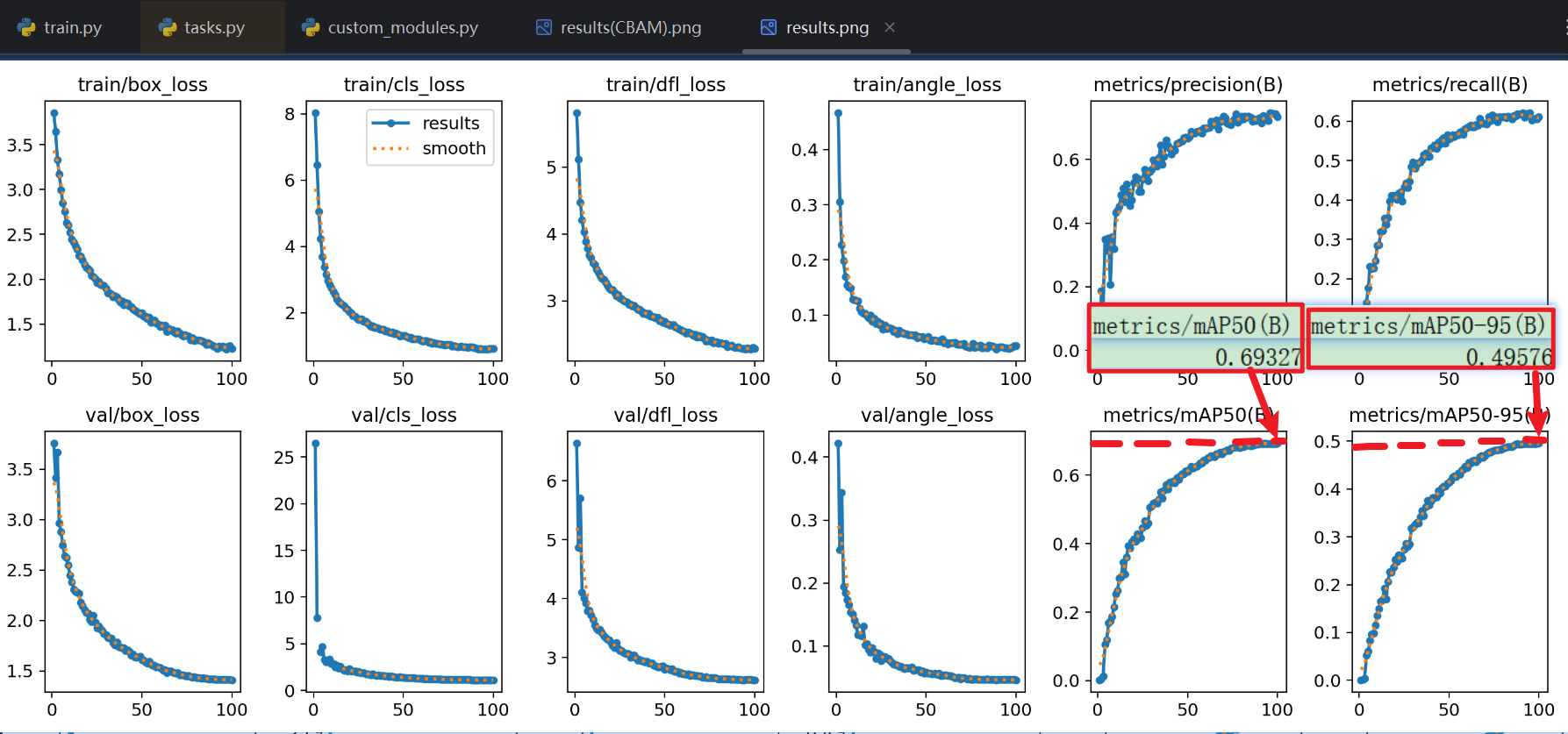

- 效果:我自己对一个数据集进行了一次【不加SE】和【加了SE】训练

- 【不加SE】得效果

- 整体效果虽然不错,但是mAP50在69%、mAP50~95在49%左右,都还不够高

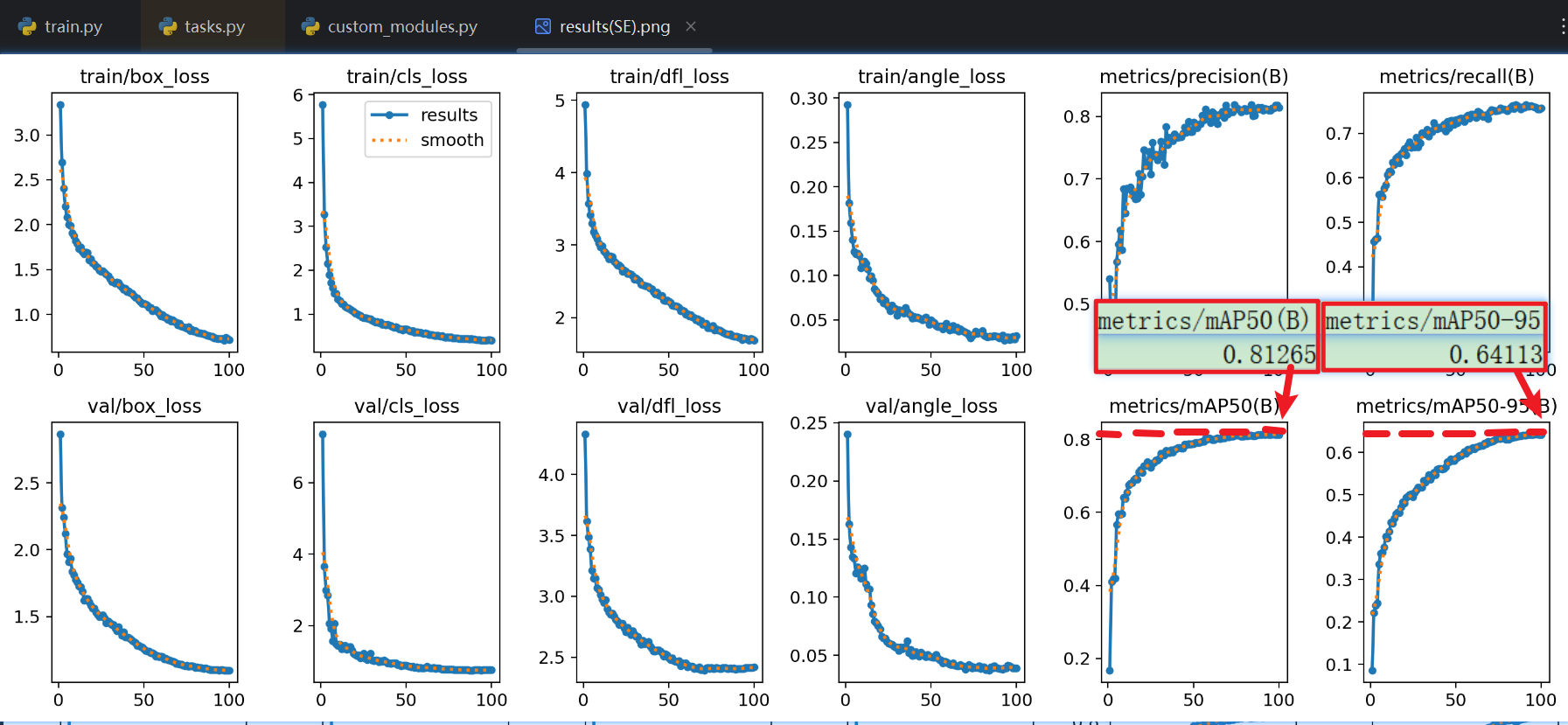

- 【加了SE】得效果

- 很顶级:明显mAP50提高了很多!!说明IoU高了,也就是预测框的定位更准

- 另外注意看 验证集的损失值 也降低了很多!!

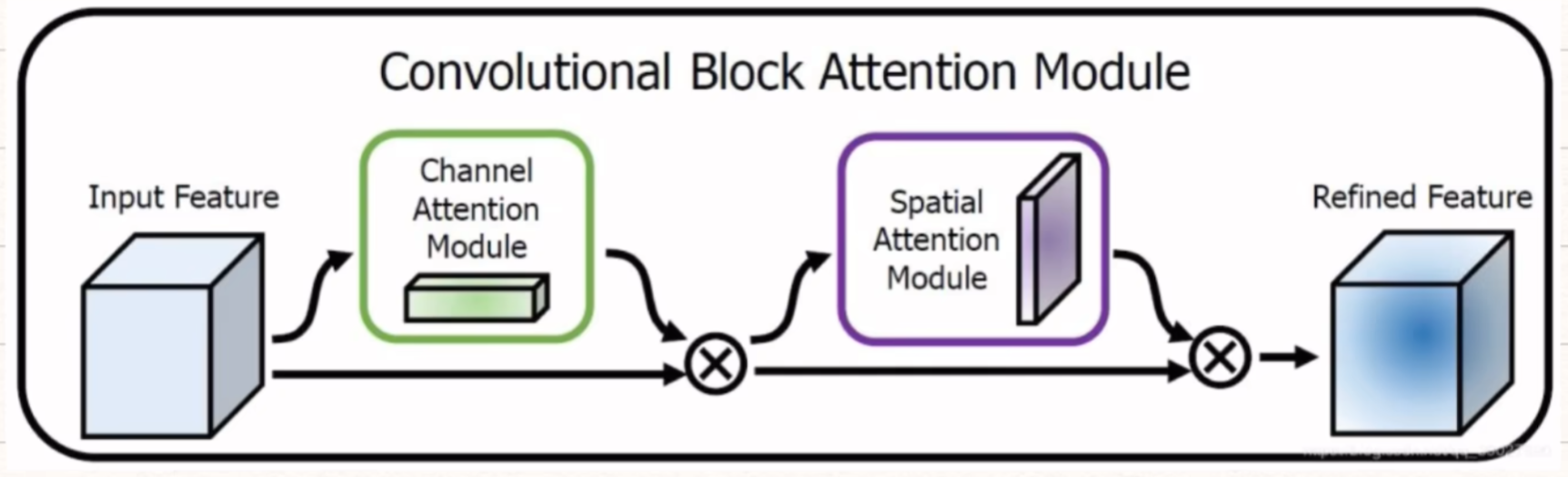

2、第二代:CBAM模块(重点,实用)

那么YOLO最常见的注意力模块就是【CBAM注意力模块】,是因为对于微小目标检测,它更轻量、即插即用,专门强化小目标特征!!!

CBAM 的全称是 Convolutional Block Attention Module(卷积块注意力模块),是专门为卷积网络设计的轻量注意力模块(算力消耗低,适合大部分电脑)。

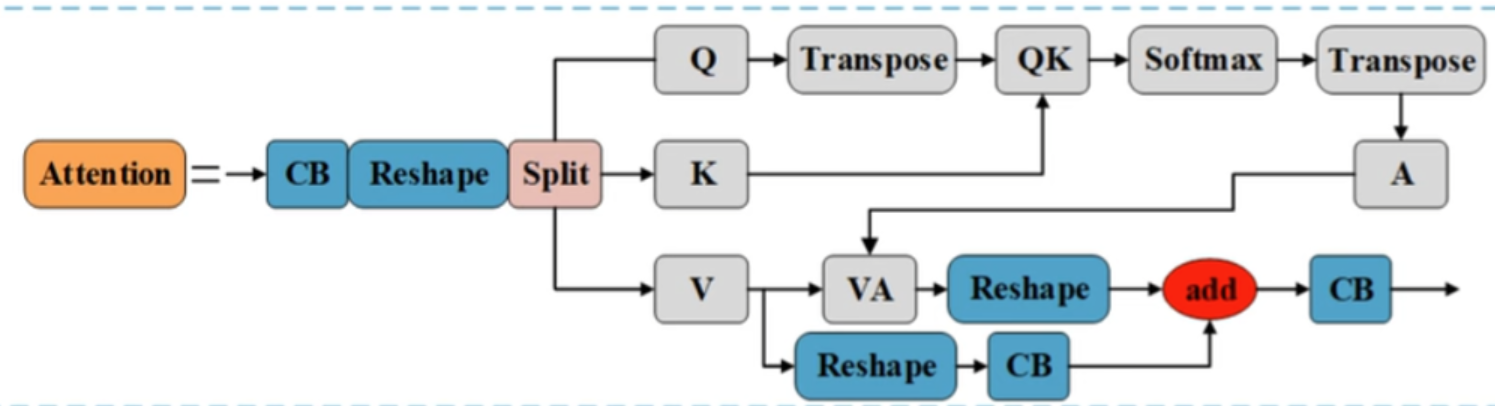

它的核心逻辑是:从两个维度给特征 “加权”—— 先选对【通道】,再选对【位置】,两步操作都针对小目标优化。

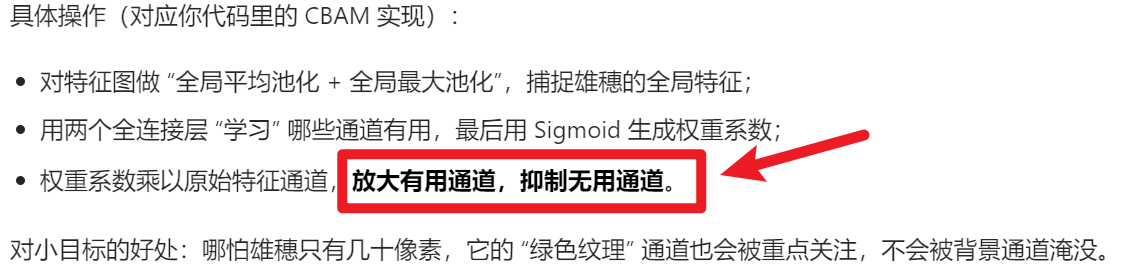

1)通道注意力(Channel Attention)—— 选 “有用的特征通道”

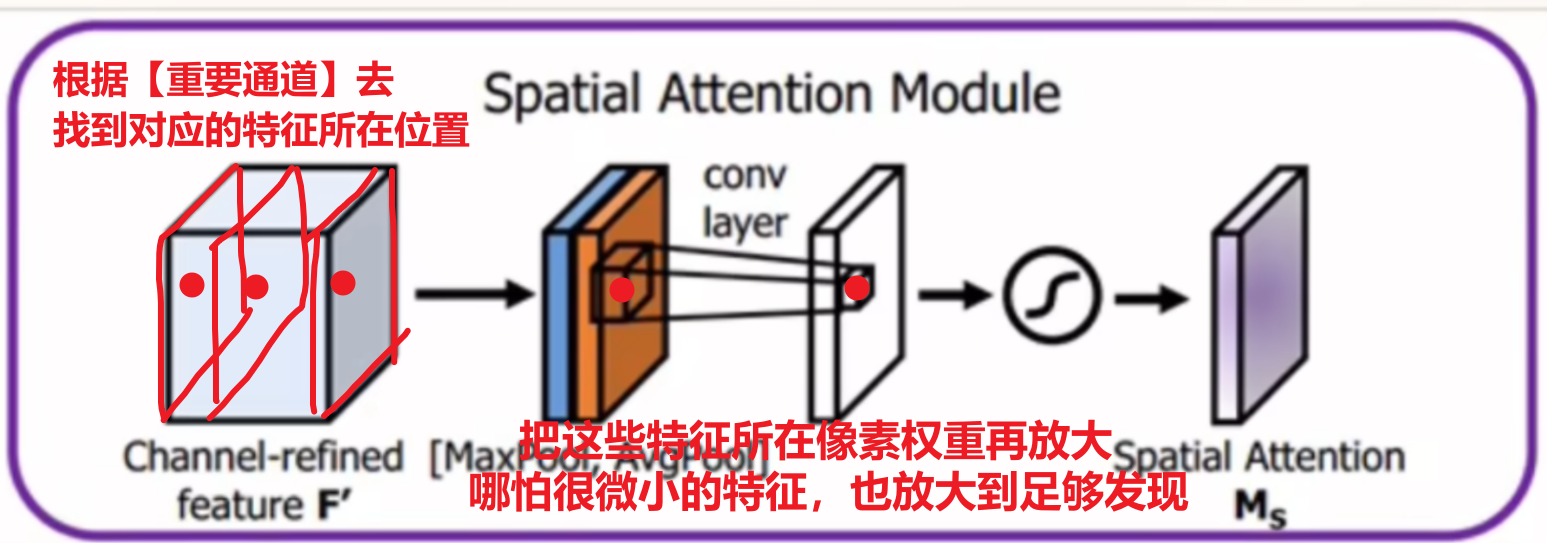

2)空间注意力(Spatial Attention)—— 选 “有用的像素位置”

还是以“玉米雄穗”为例子,经过通道注意力后,模型已经聚焦到 “绿色纹理” 这类特征,但还不知道这些纹理在图片的哪个位置(是雄穗的位置,还是叶片的位置?)

空间注意力的作用:给雄穗所在的像素位置加权重,给背景位置降权重 —— 让模型精准定位小目标的位置。

总结:

以玉米雄穗数据集为例子,大致流程就是:

- 输入特征图(抓极小雄穗) ↓

- 通道注意力:选“绿色纹理”通道 → 加权 ↓

- 空间注意力:选“雄穗位置”像素 → 加权 ↓

- 输出加权后的特征图(雄穗特征被放大,背景被抑制)

3)比【SE】优秀的原因,为什么我们要选他

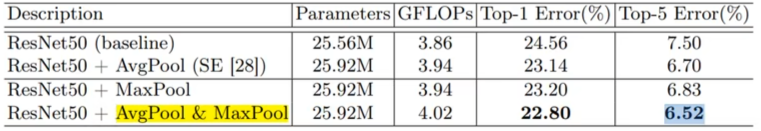

CBAM融合了 “通道注意力 + 空间注意力”,并构成了一段包含池化、全连接、卷积的代码。对于他提出的论文里也和SE做了详细对比:

- 在加入【平均池化】+【最大池化】后,对比效果最好

- 加入【通道注意力】+【空间注意力】

- 而且先【通道】再【空间】的顺序,对比效果最好

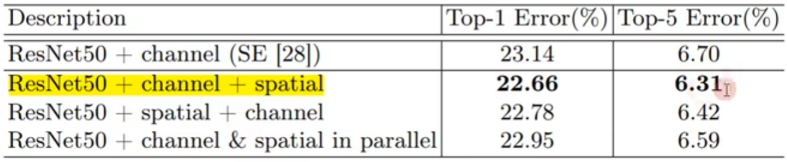

- 以及热力图效果对比

- 另外,YOLO官方还提供了拆开的【通道注意力ChannelAttention】、【空间注意力SpatialAttention】

- 效果:但是我经过亲自实验,【单独用这两个】注意力模块的效果比【整个CBAM的:先“通道” 再“空间”】的效果差!!!

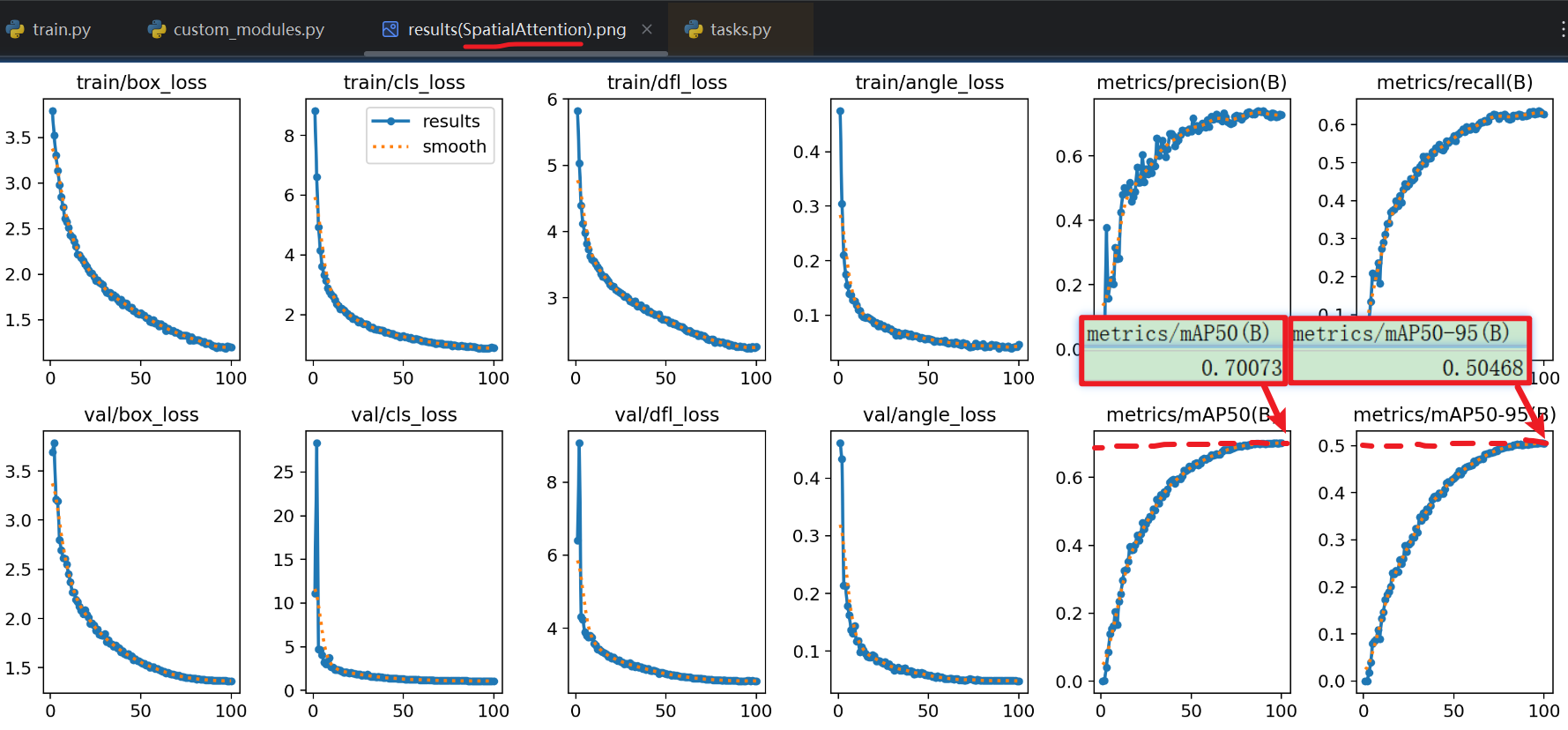

- 单独用【空间注意力SpatialAttention】的效果

- 甚至还不如【SE模块】的效果。。。。拉完了

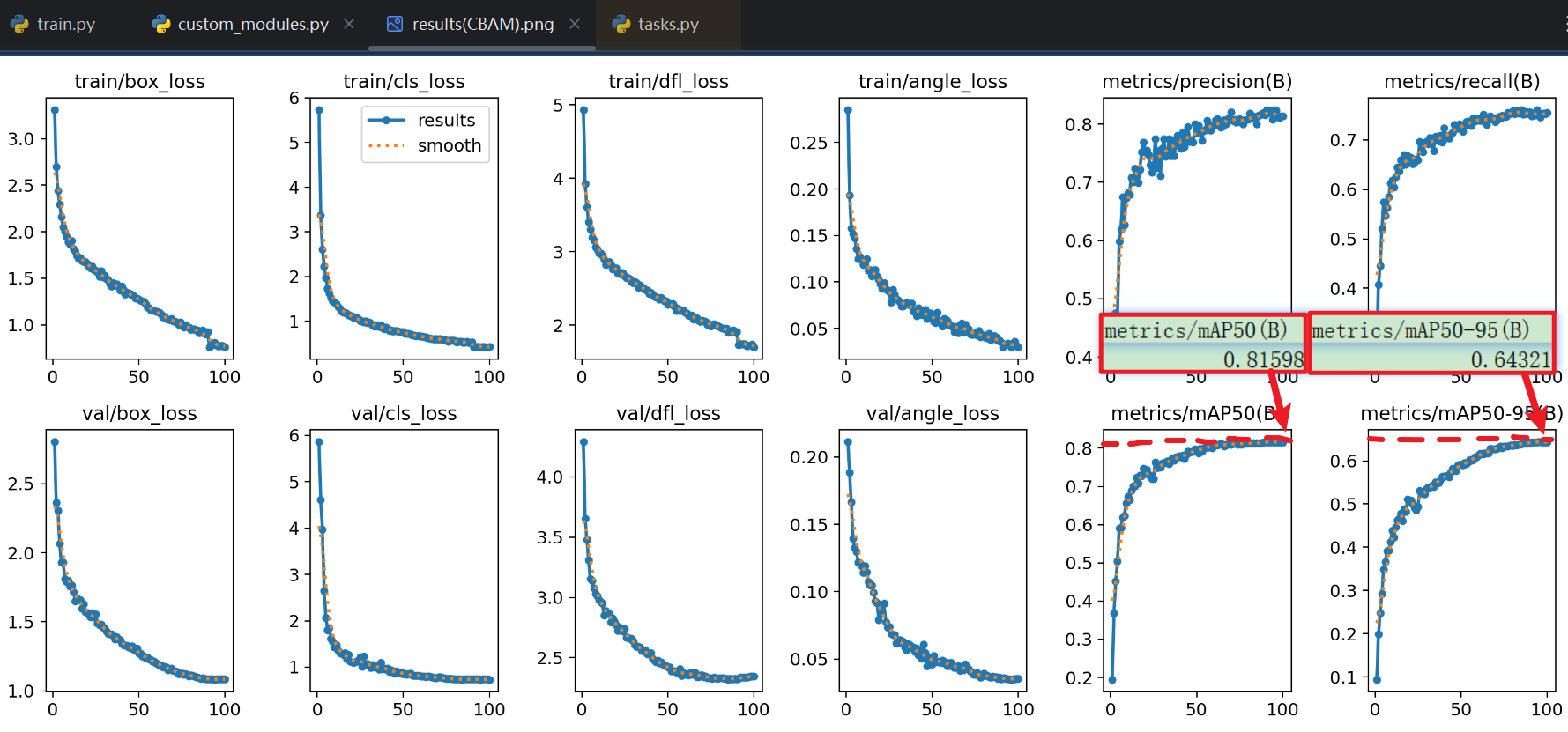

- 整个用【CBAM】的效果:

- 夯爆了:明显这次mAP比【单独用空间注意力】、或用【SE模块】得高!!!另外看损失指标也降低了很多(看图表纵坐标数据,别只看曲线)

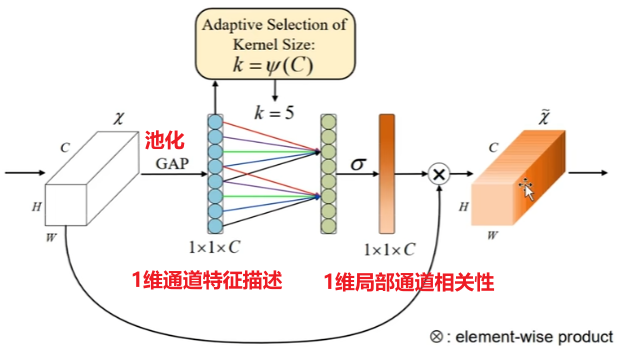

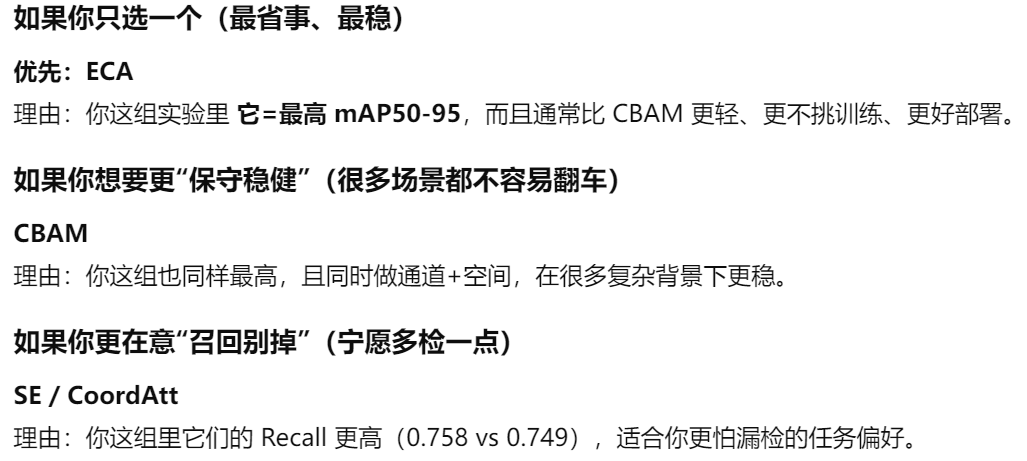

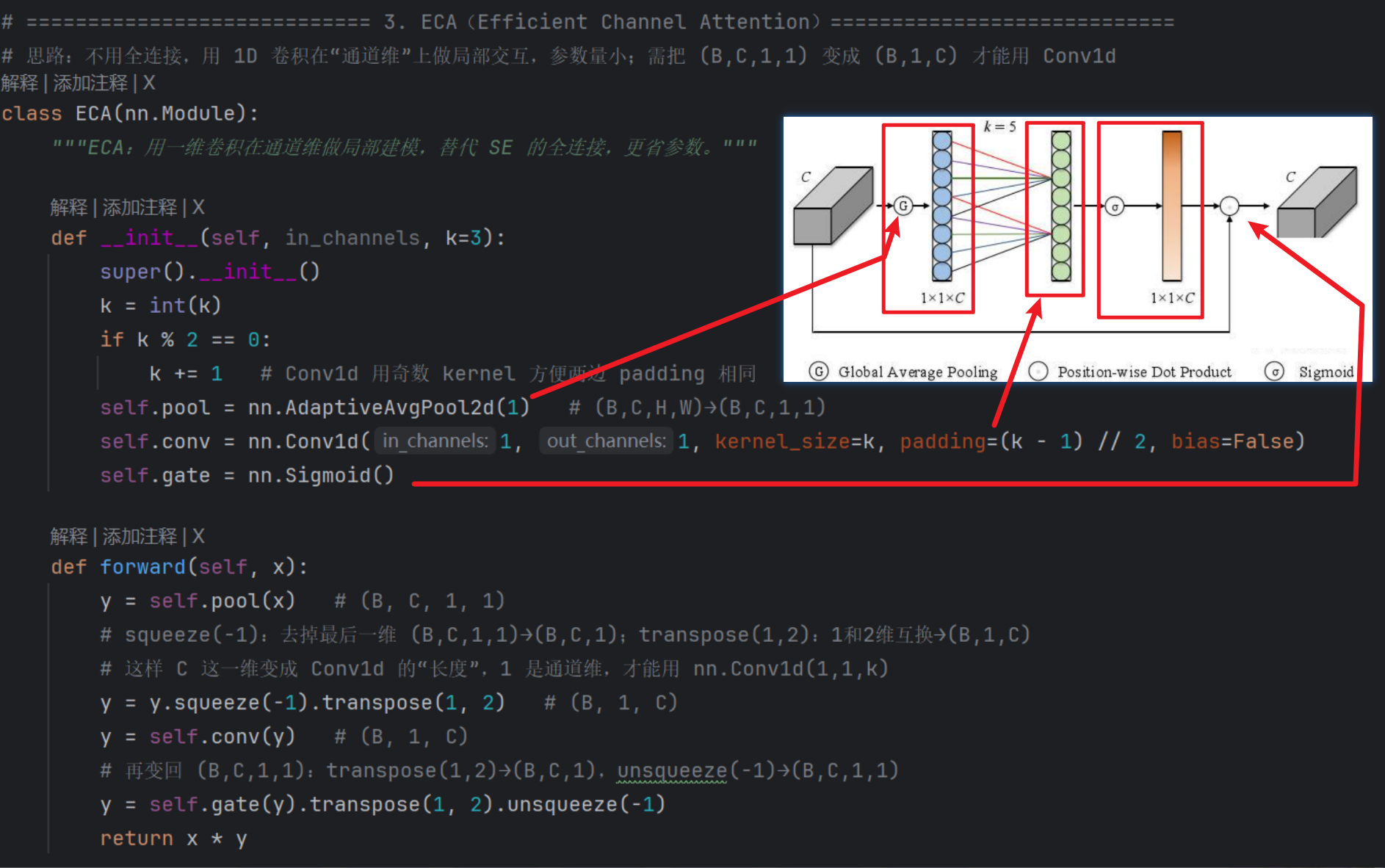

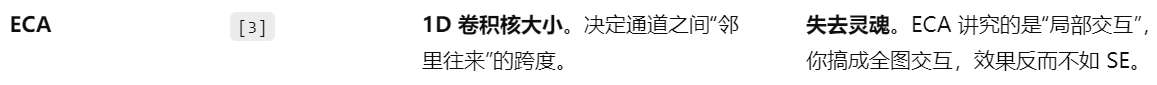

3、第三代:ECA模块

SENet中降维会给通道注意力机制带来副作用,并且捕获所有通道之间的关系效率不高,且非必要。ECA注意力机制模块直接在全局平均池化层后使用1*1卷积,去除全连接层。避免了维度锐减,有效的捕获了跨通道交互。

- 实现步骤如下:

- (1)将输入特征图经过全局平均池化,特征图从[h,w,c]的矩阵变成[1,1,c]的向量。

- (2)根据特征图的通道数计算得到自适应的一维卷积核大小kernelsize。

- (3)将kernelsize用于一维卷积中,得到对于特征图的每个通道的权重。

- 将归一化权重和原输入特征图逐通道相乘,生成加权后的特征图。

- 说白了就两个人话:

- 1、不降维

- 2、专注【局部】通道特征交互融合

- 特点:

- ✔ 更轻量

- ✔ 参数更少

- ✔ 效果接近甚至超过CBAM

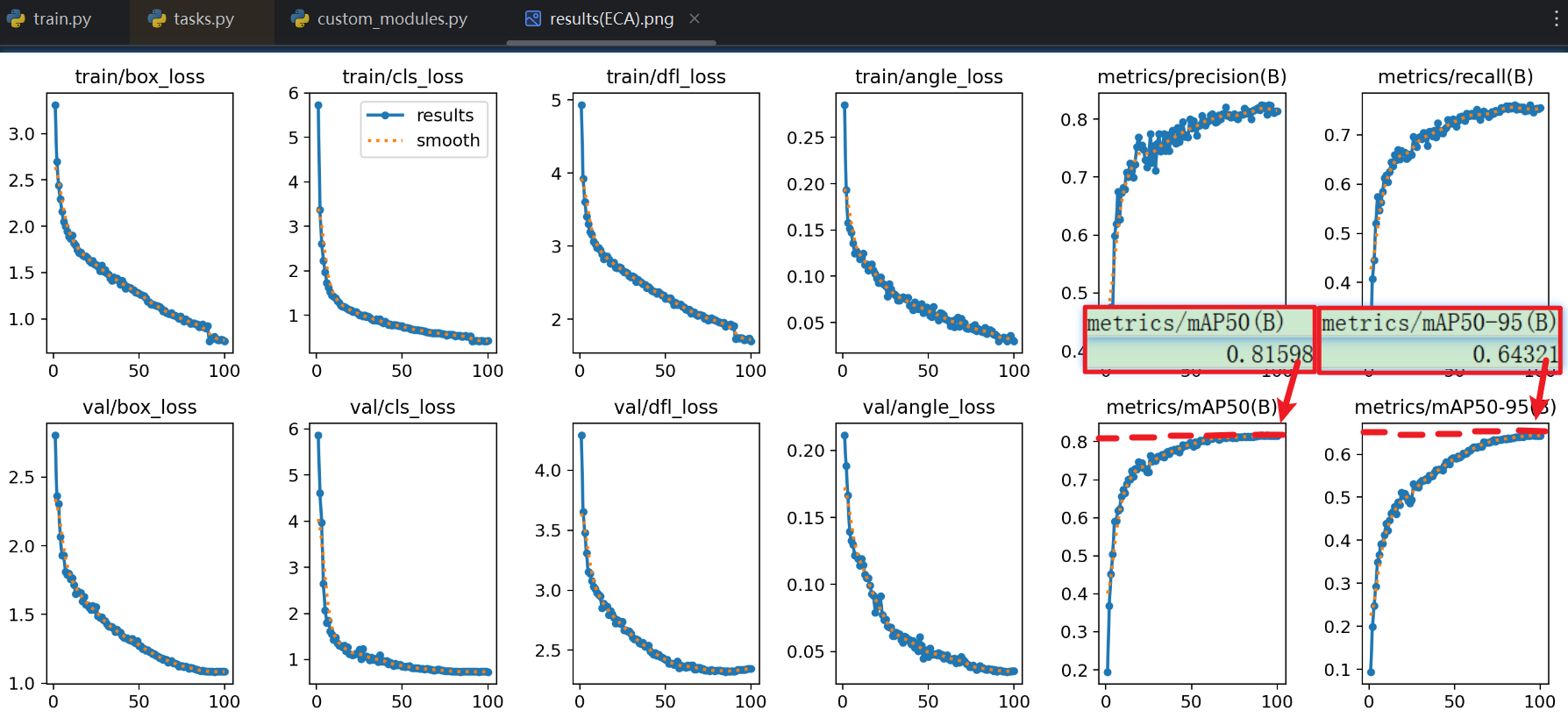

- 实际效果:

- 那么经过我换成【ECA模块】后得到的实验结果,竟然和【CBAM】一模一样,基本数据完全一样,那就说明【ECA】≈【CBAM】基本没区别

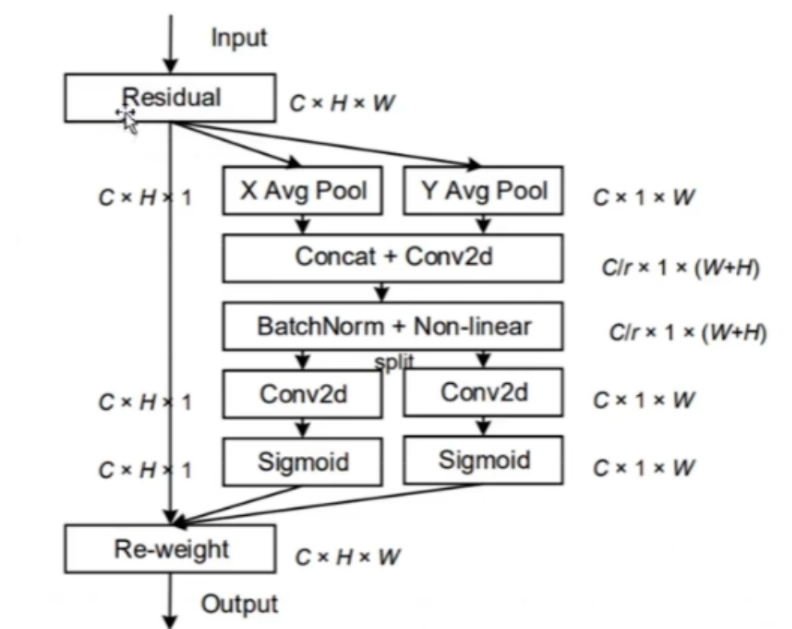

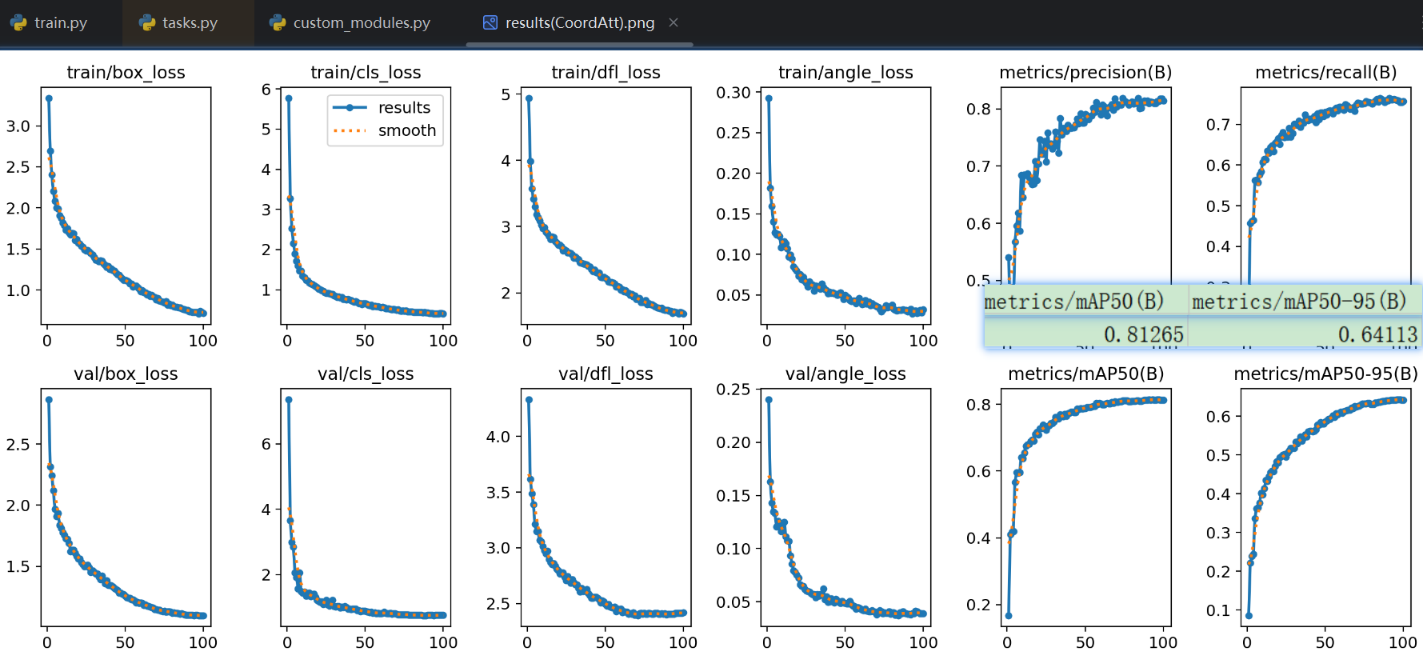

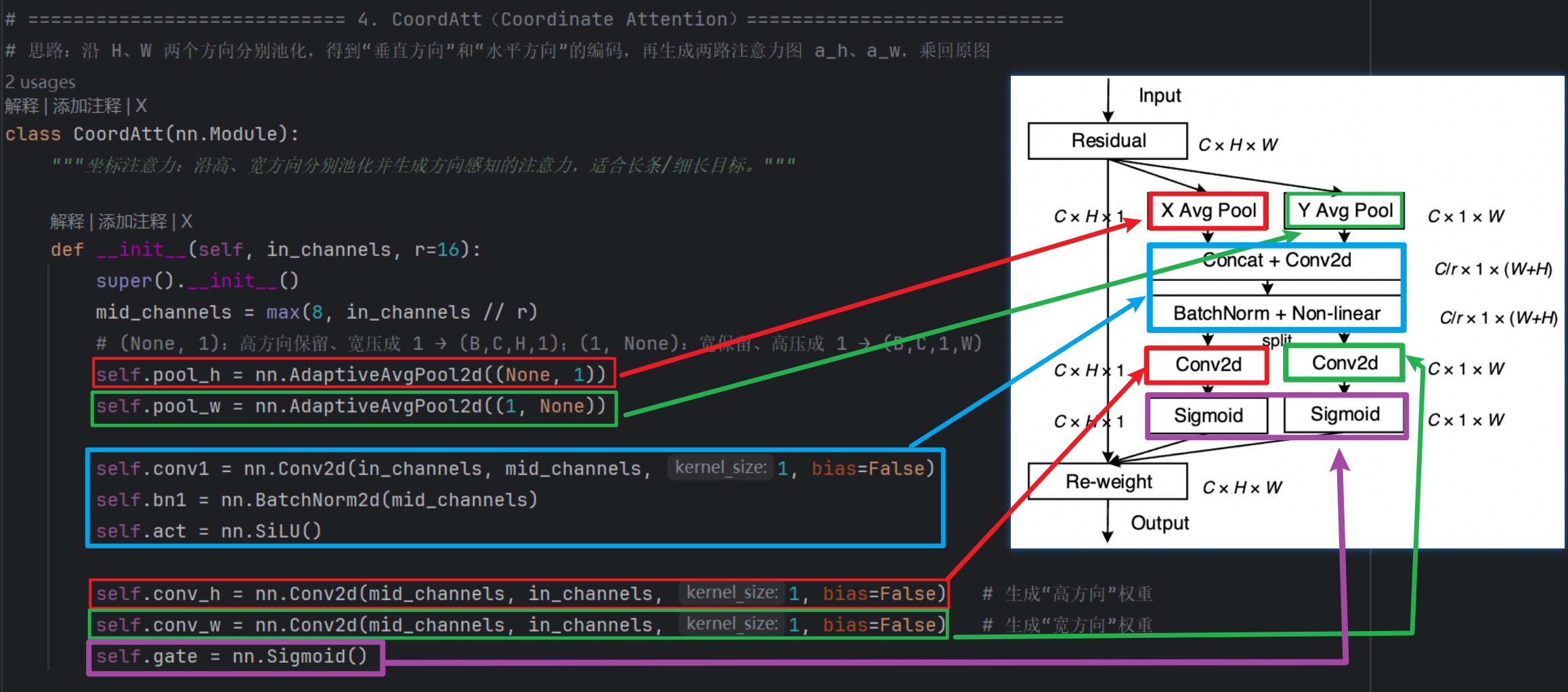

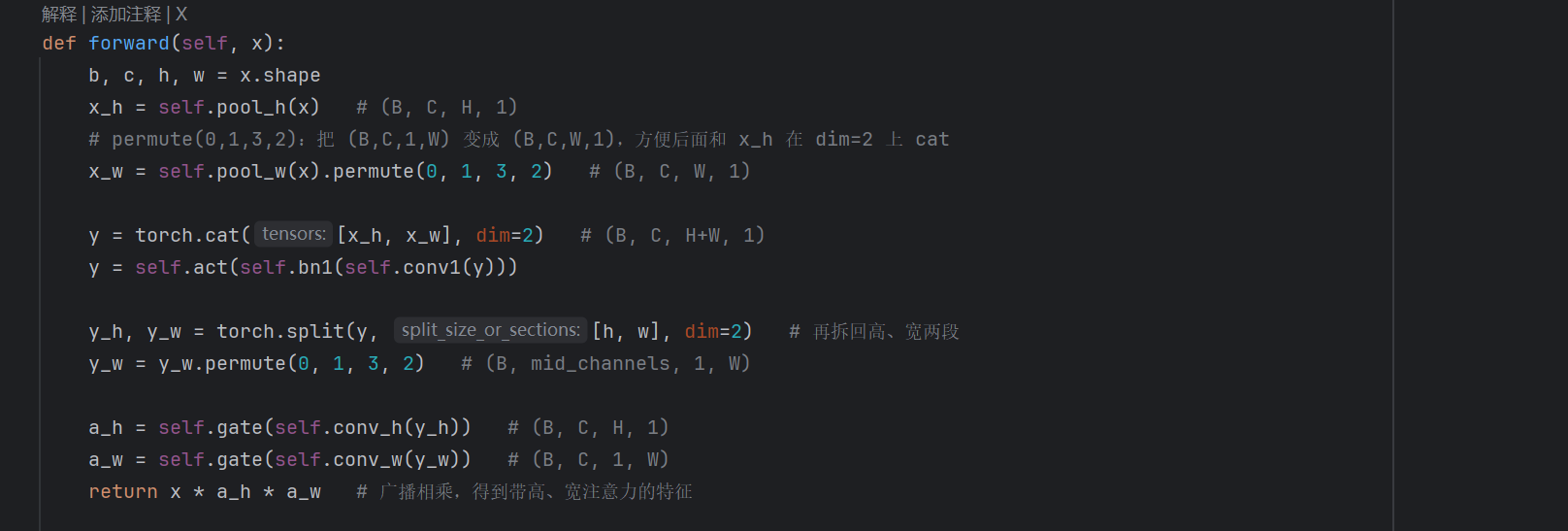

4、第四代:Coordinate Attention模块

现有的注意力机制(如CBAM、SE)在求取通道注意力的时候,通道的处理一般是采用全局最大池化/平均池化,这样会损失掉物体的空间信息。Coordinate Attention模块作者期望在引入通道注意力机制的同时,引入空间注意力机制,作者提出直接将注意力机制将位置信息嵌入到了通道注意力中

步骤如下:

将输入特征图分别在为宽度和高度两个方向分别进行全局平均池化,分别获得在宽度和高度两个方向的特征图。

假设输入进来的特征层的形状为[C,H,W],在经过宽方向的平均池化后,获得的特征层shape为[C,H,1],此时我们将特征映射到了高维度上;

在经过高方向的平均池化后,获得的特征层shape为[C,1,W],此时我们将特征映射到了宽维度上。

然后将两个并行阶段合并,将宽和高转置到同一个维度,然后进行堆叠,将宽高特征合并在一起,此时我们获得的特征层为:[C,1,H+W],利用卷积+标准化+激活函数获得特征。

之后再次分开为两个并行阶段,再将宽高分开成为:[C,1,H]和[C,1,W],之后进行转置。获得两个特征层[C,H,]和[C,1,w]。

然后利用1x1卷积调整通道数后取sigmoid获得宽高维度上的注意力情况。乘上原有的特征就是CA注意力机制。

- 特点:

- ✔ 结合位置信息

- ✔ 更适合检测任务

- 实际效果:

- 我个人的实验证明,效果跟【CBAM】和【ECA】没有太大区别

- 而且略微在小数级别比他两低一点,给到一个人上人吧

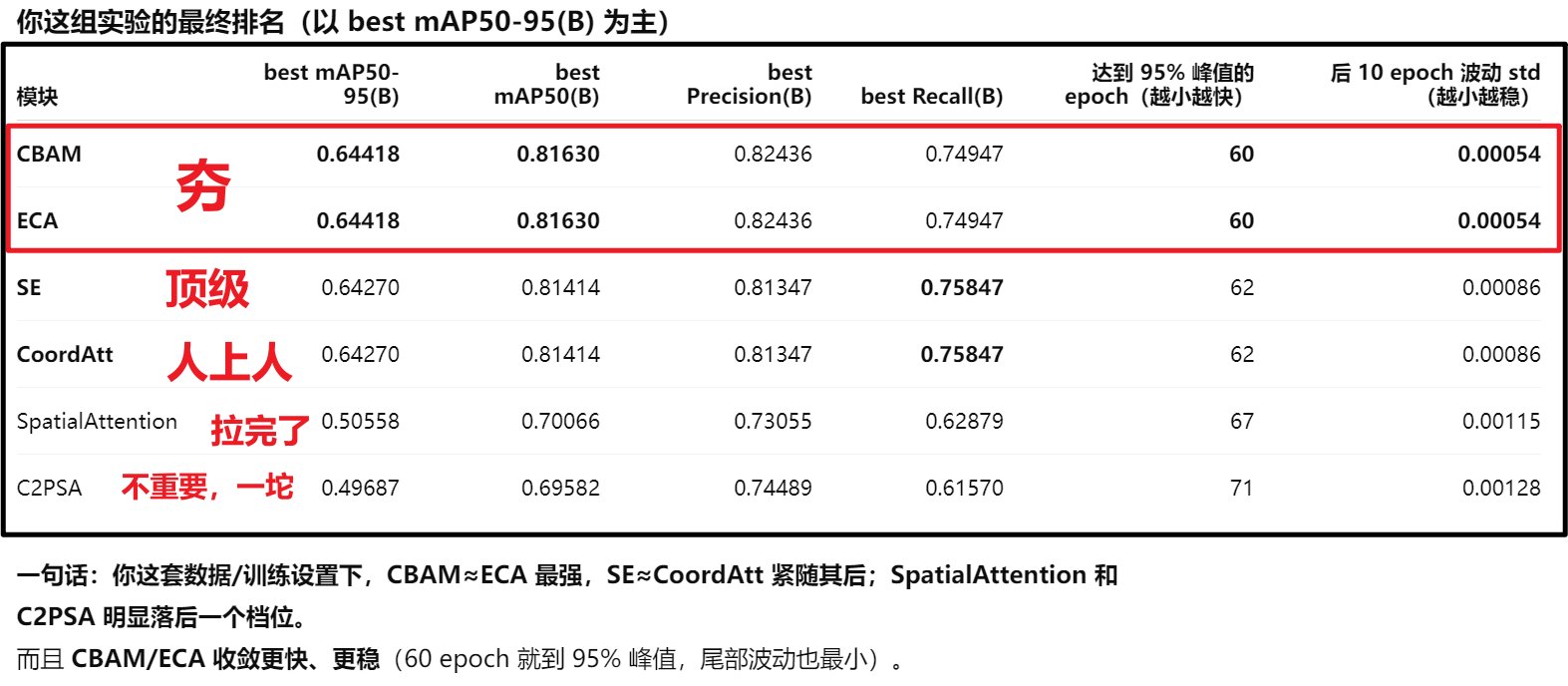

5、总结汇总

这里还有一个C2PSA,yolo加入的一个官方注意力模块,我好奇试了一下,效果就是一坨屎,后来我才知道这玩意只适用在backbone处加,而我们一般加注意力、调整结构就调head就好了,所以不推荐C2PSA,也不过多介绍它

三、代码实操

首先讲一下写一个模块的套路(不管是注意力模块、C3k2、上采样下采样...等),无非就是:

规定模板套路:

- def __init__(self, ......):初始化这个模块

- 先继承父类:super().__init__()

- 然后分别针对不同模块,创建内部每一个细节结构

- def forward(self, ......):规定前向传播的流程

- 根据前面__init__定义的结构,返回权重结果w

- 一般全连接层的流程是【嵌套函数】,比如A—>B—>C,代码就是C ( B( A( w ) ) )

然后yolo的【nn】提供的一些便捷函数:

- nn.AdaptiveAvgPool2d(要压缩成的维度):平均池化

- nn.Conv2d():创建卷积

- 一般就6个参数(输入通道, 输出通道, kernel_size, stride, padding, bias)

- - 输入通道数(输入特征图有几层“通道”)。

- - 输出通道数(卷积后得到几层通道)。

- - 卷积核大小(如 1 表示 1×1,只做通道混合、不改变 H,W;3 或 7 表示 3×3、7×7)。

- - stride:步长,默认 1(不缩小尺寸)。

- - padding:四周补零圈数,通常取 (kernel_size-1) // 2 使 H、W 不变。

- - bias:是否加偏置,True/False

- nn.Linear():全连接层(线性变换)

- 对每个样本做「输入维 → 输出维」的矩阵乘法

- 一般三个函数(输入通道, 输出通道, bias)

nn.Linear() nn.Conv2d() 输入形状 (B, in_channels),没有 H、W (B, 2, H, W),有高、宽 输出形状 (B, out_channels) (B, 1, H, W),还是 H×W 在做什么 对整根向量做线性组合(全局、无空间) 对每个位置用一个小窗口做线性组合(局部、有空间) “2 / 1” 的含义 不适用 2 个通道进 → 1 个通道 - nn.损失函数名字:调用现成写好的各种损失函数

- 例如:nn.ReLU()、nn.SiLU().......

- nn.Sequential:就是一个按顺序执行的模块容器:

- 输入依次通过第 1 个、第 2 个、第 3 个.....子模块,得到输出。不用自己写 forward。

- 例如:

out = 输入

out = 第1层(out)

out = 第2层(out)

out = 第3层(out)

return out

- nn.Sequential = “把好几层按顺序串起来,当一个小网络用”;

1、SEnet模块

1)导入【SE】模块

由于yolo11官方代码没有写SE模块,不知道是觉得没用废除了还是为啥,这里我们只能自己手写一个,记住SE的结构:

- 1、全局平均池化:把每个通道C对应的2维数据用平均数压缩成一个1维数字

- 2、2个卷积夹一个损失函数:fc1 —> 损失函数 —> fc2

- 注意损失函数可以是ReLU也可以是SiLU,SiLU计算精度更高

- 另外注意:1×1 卷积等价于“按通道做线性变换”,大家也习惯叫 “全连接”

- 3、最后接一个Sigmoid()函数

(具体代码我会放在在最后,可以直接复制拿去直接用)

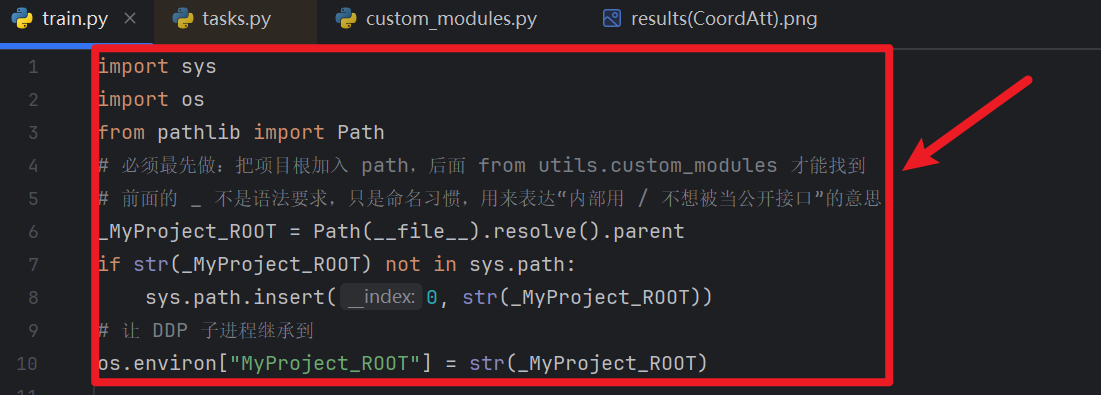

【注意:一定要做!!!!】

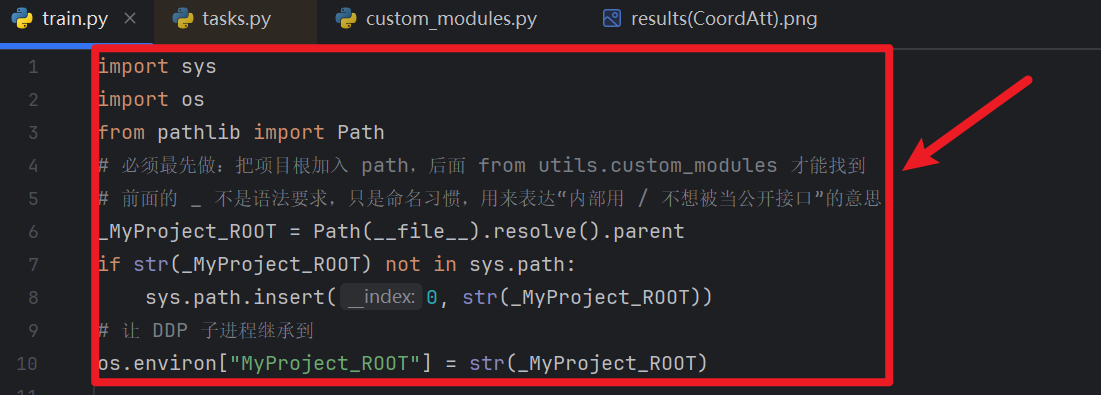

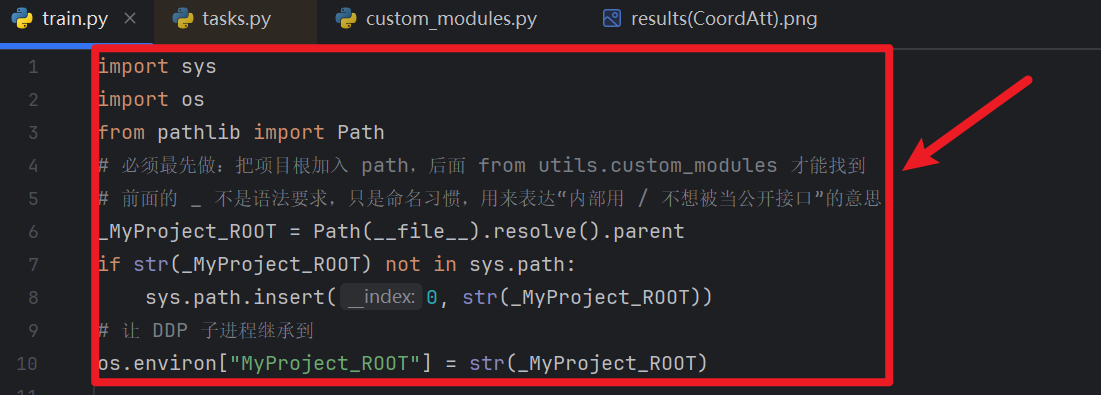

- 自定义模块还需要从【train.py】前面注入:

- 对应task.py那,你注册的当前train.py路径为全局环境变量“XXX”,然后task.py才能获取XXX的时候读到train.py路径

import sys import os from pathlib import Path # 必须最先做:把项目根加入 path,后面 from utils.custom_modules 才能找到 # 前面的 _ 不是语法要求,只是命名习惯,用来表达“内部用 / 不想被当公开接口”的意思 _你设置的全局环境变量 = Path(__file__).resolve().parent if str(_你设置的全局环境变量) not in sys.path: sys.path.insert(0, str(_你设置的全局环境变量)) # 让 DDP 子进程继承到 os.environ["你设置的全局环境变量"] = str(_你设置的全局环境变量)- task.py能读到train.py后,再把我们自定义的模块注入

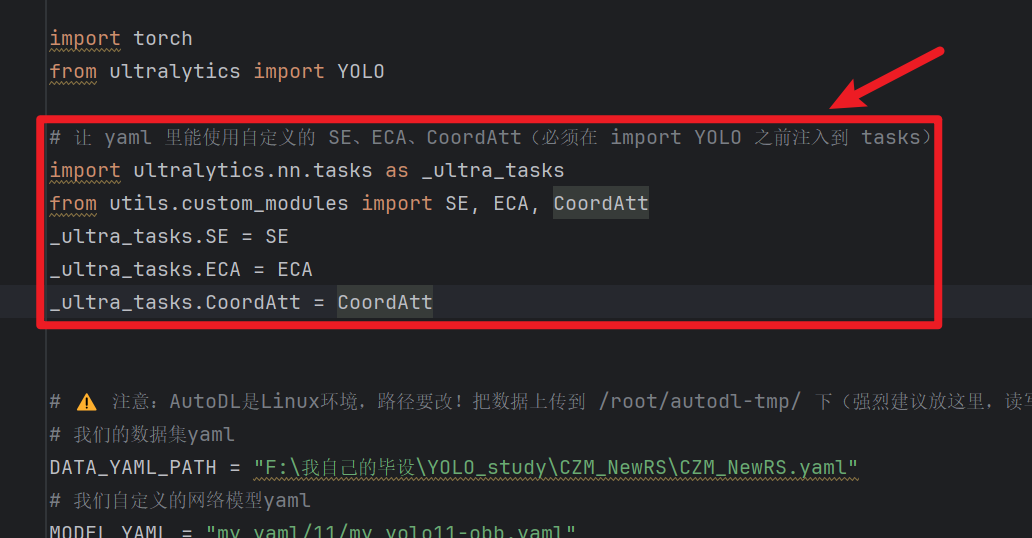

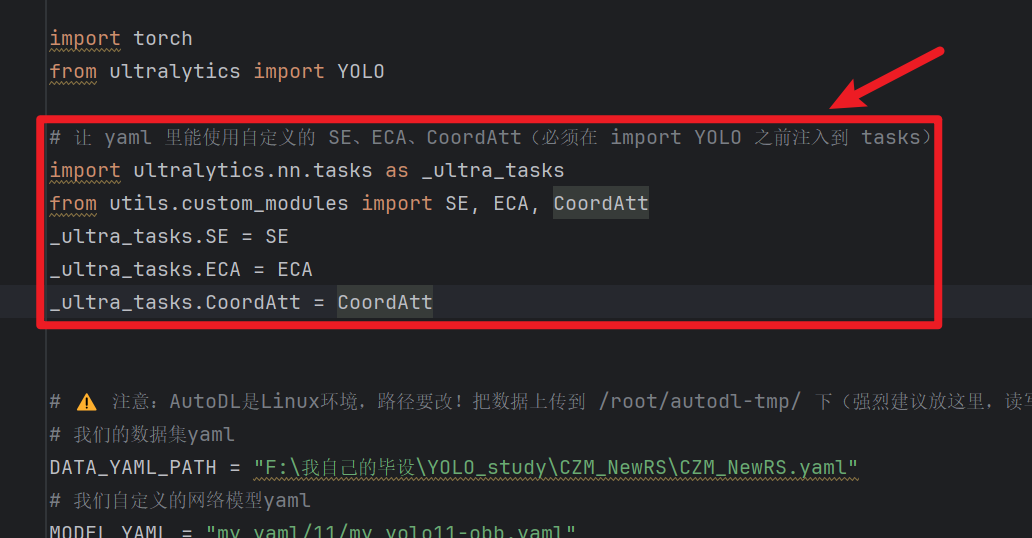

# 让 yaml 里能使用自定义的 SE、ECA、CoordAtt(必须在 import YOLO 之前注入到 tasks) import ultralytics.nn.tasks as _ultra_tasks from utils.custom_modules import SE, ECA, CoordAtt _ultra_tasks.SE = SE _ultra_tasks.ECA = ECA _ultra_tasks.CoordAtt = CoordAtt

2)yaml网络结构插入

提醒:【注意力模块】不改变通道数!!!!!!!

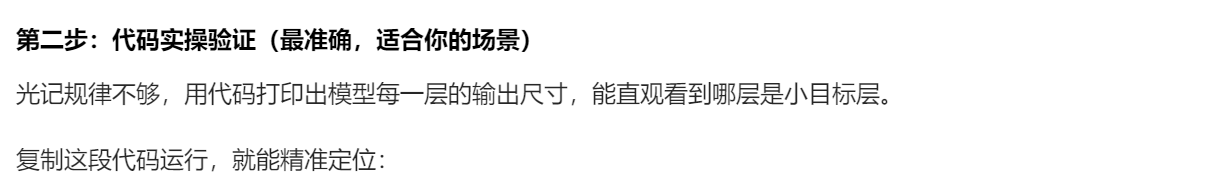

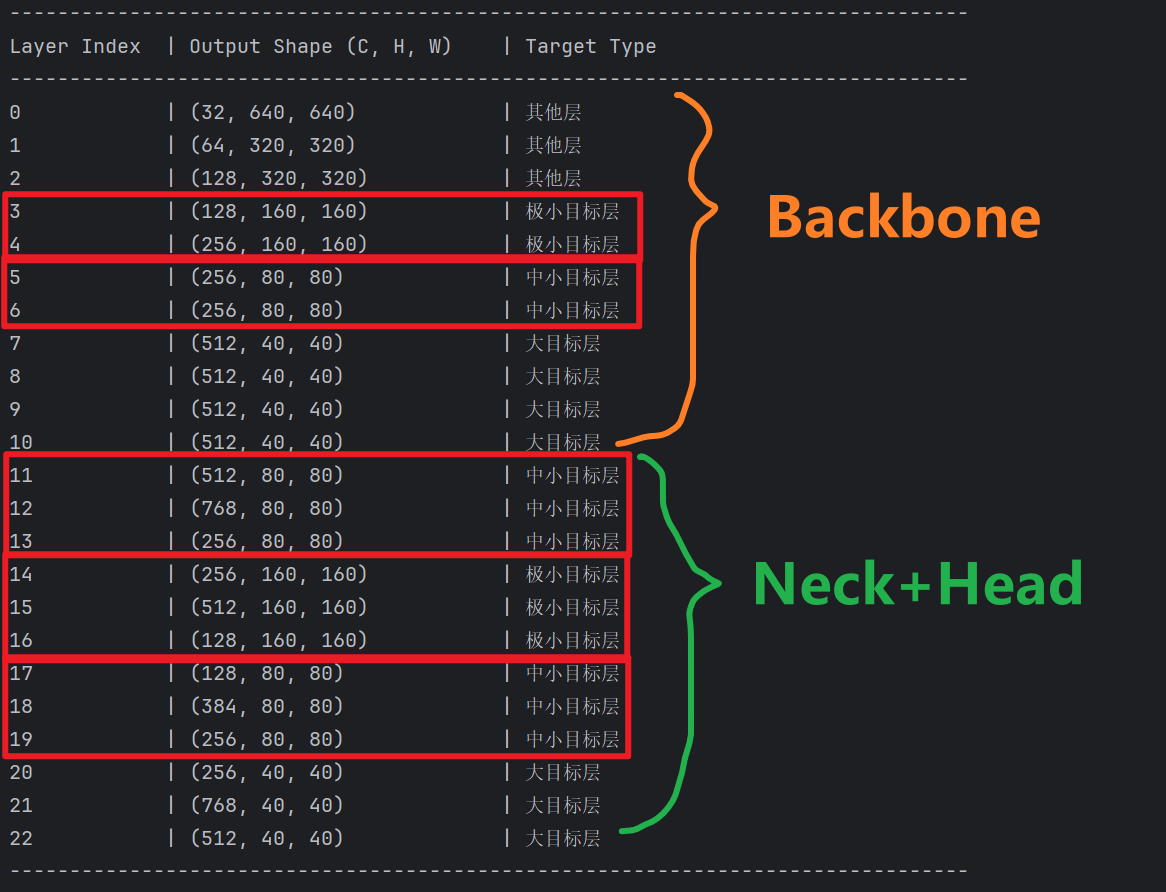

那么我们应该插到哪?怎么插呢?

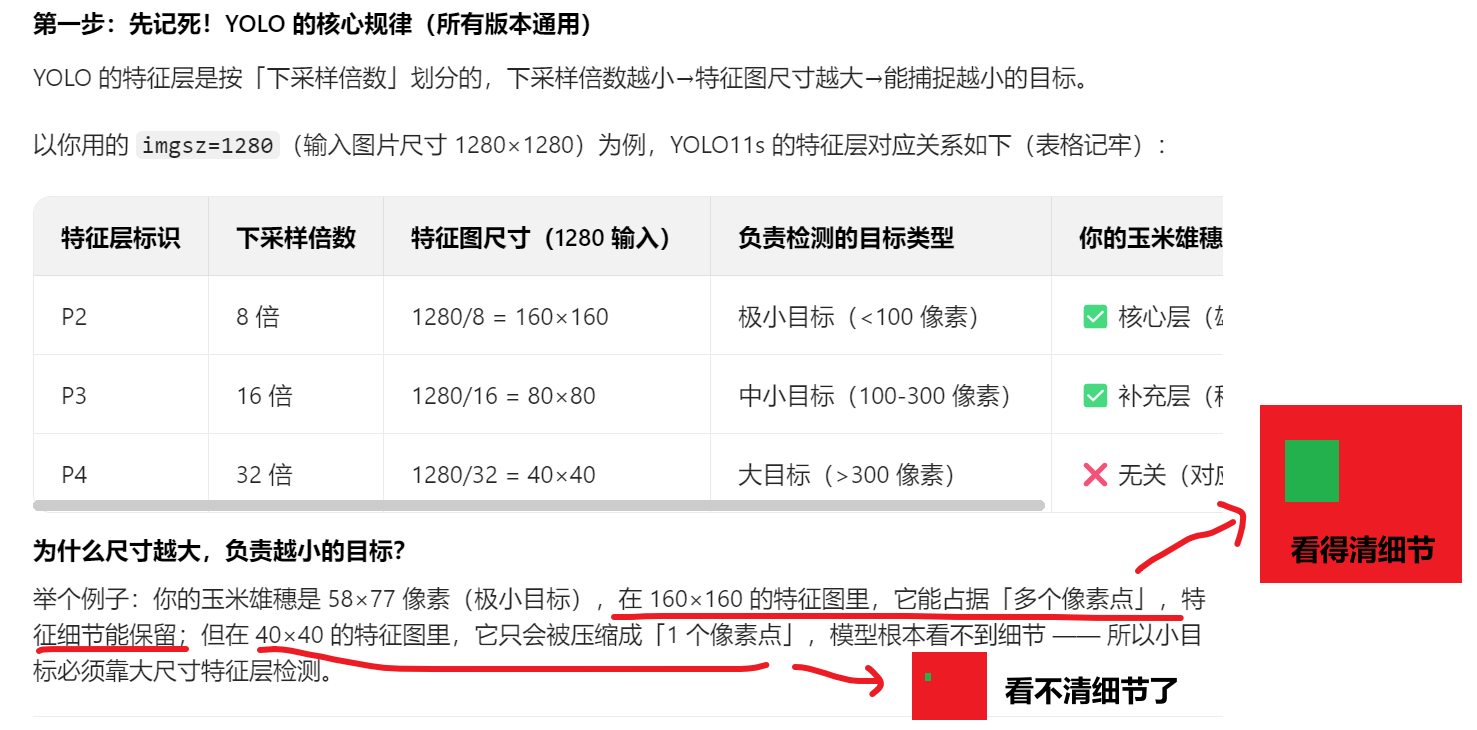

- 1、插入到【捕捉最大尺寸】检测头的【上采样 + concat + C3k2】的【前面】

- 在这插注意力,就是在 “分流” 给检测头之前,先给 “水流” 做一次 “过滤”和“加压”。如果最大尺寸层的特征被精炼了,那么下游的检测头拿到的东西质量都会变高。

- 说人话就是:我们要在最大尺寸的图片中用【注意力】捕捉细节!!!

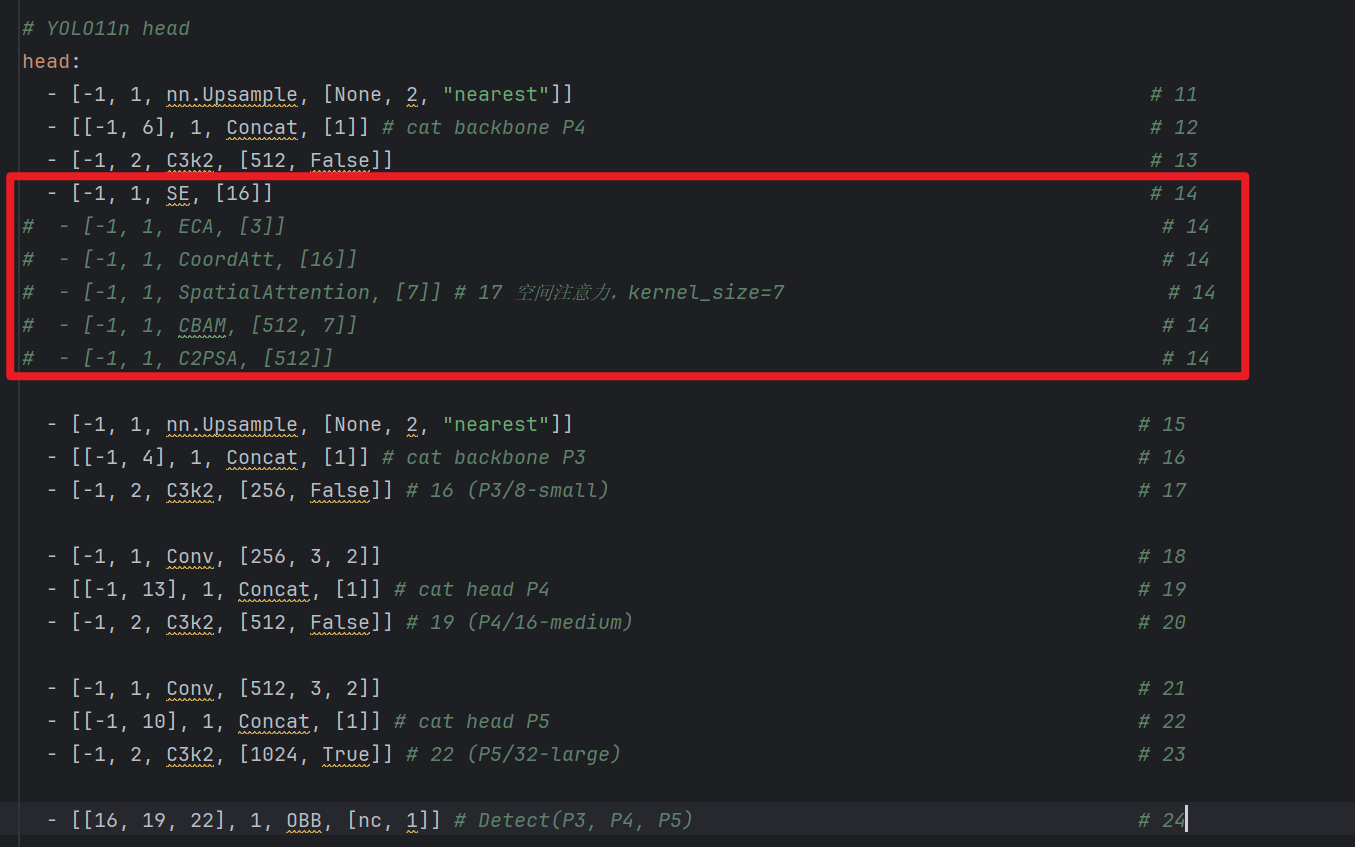

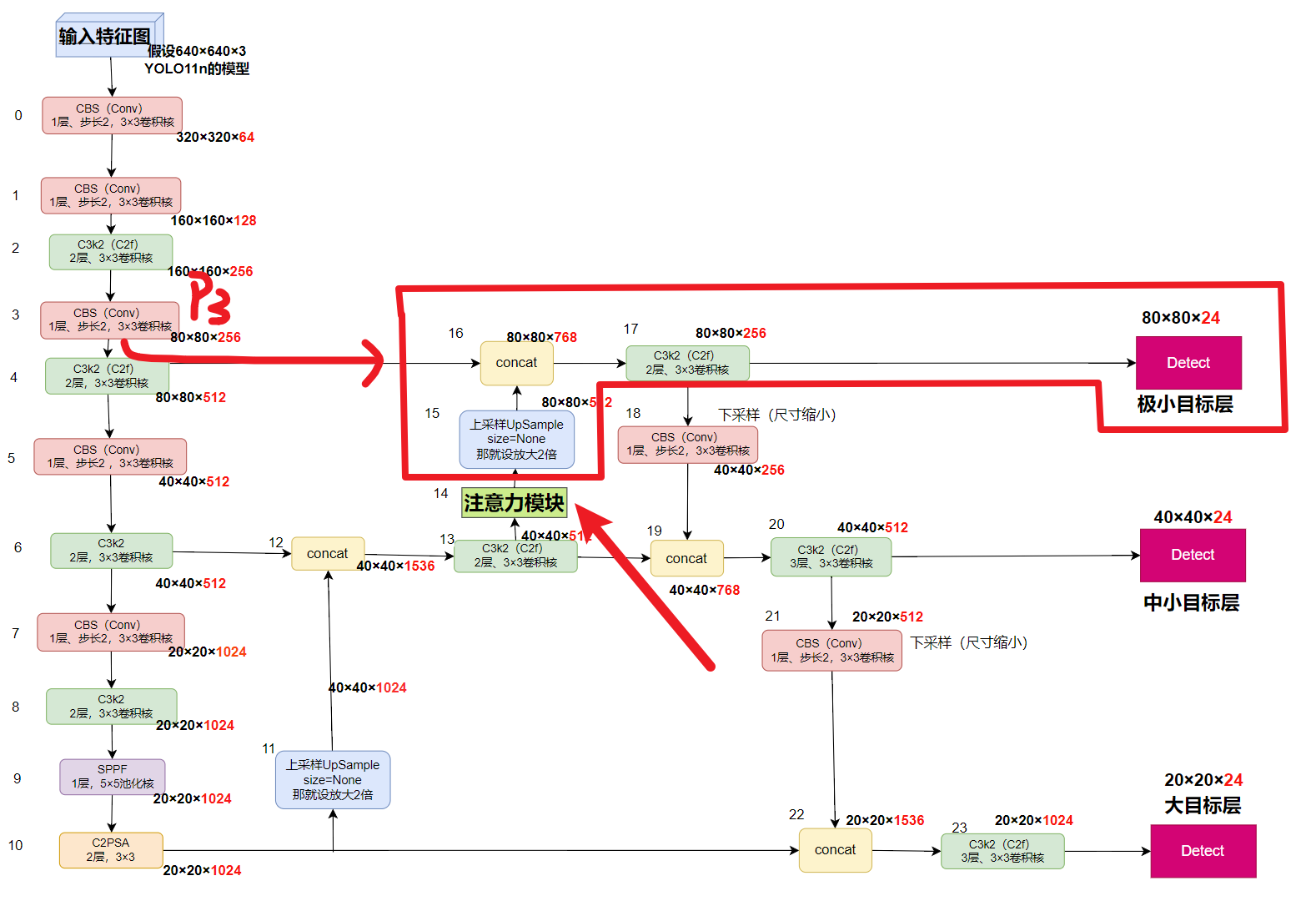

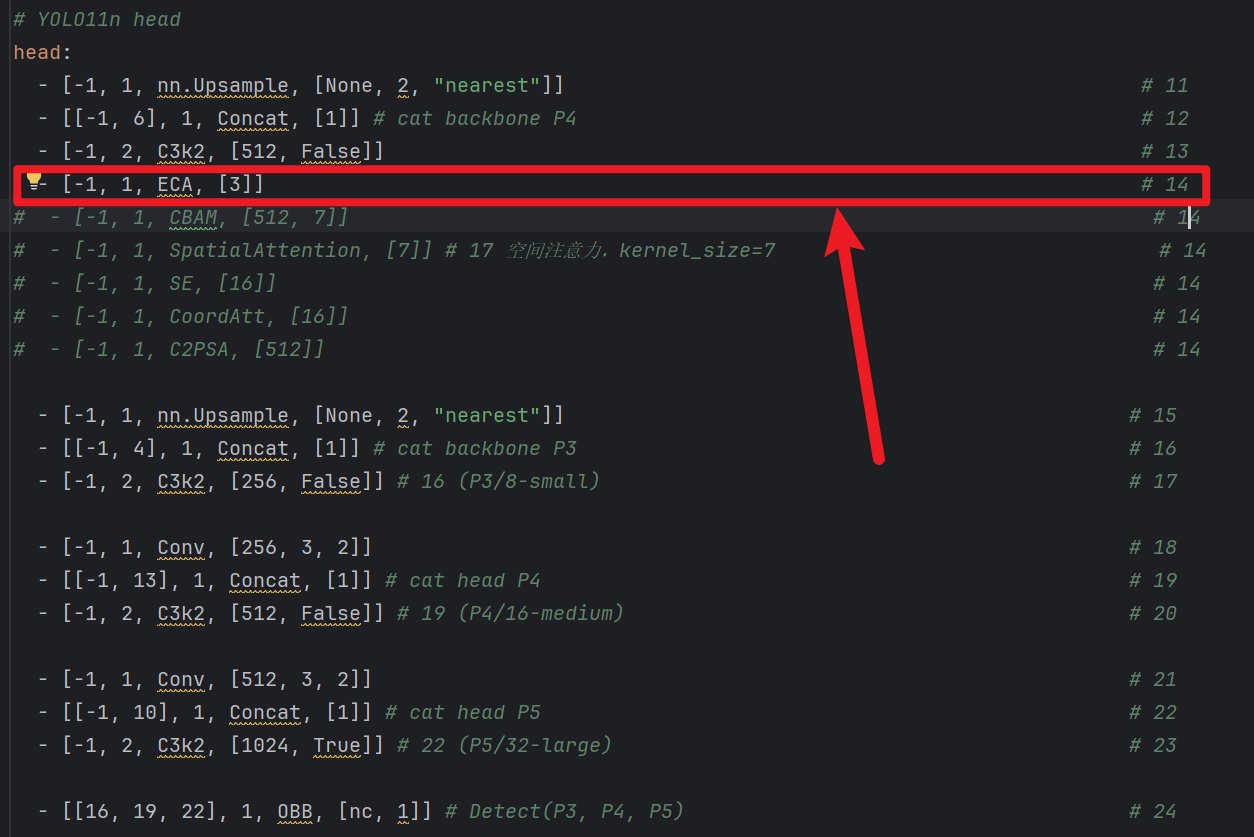

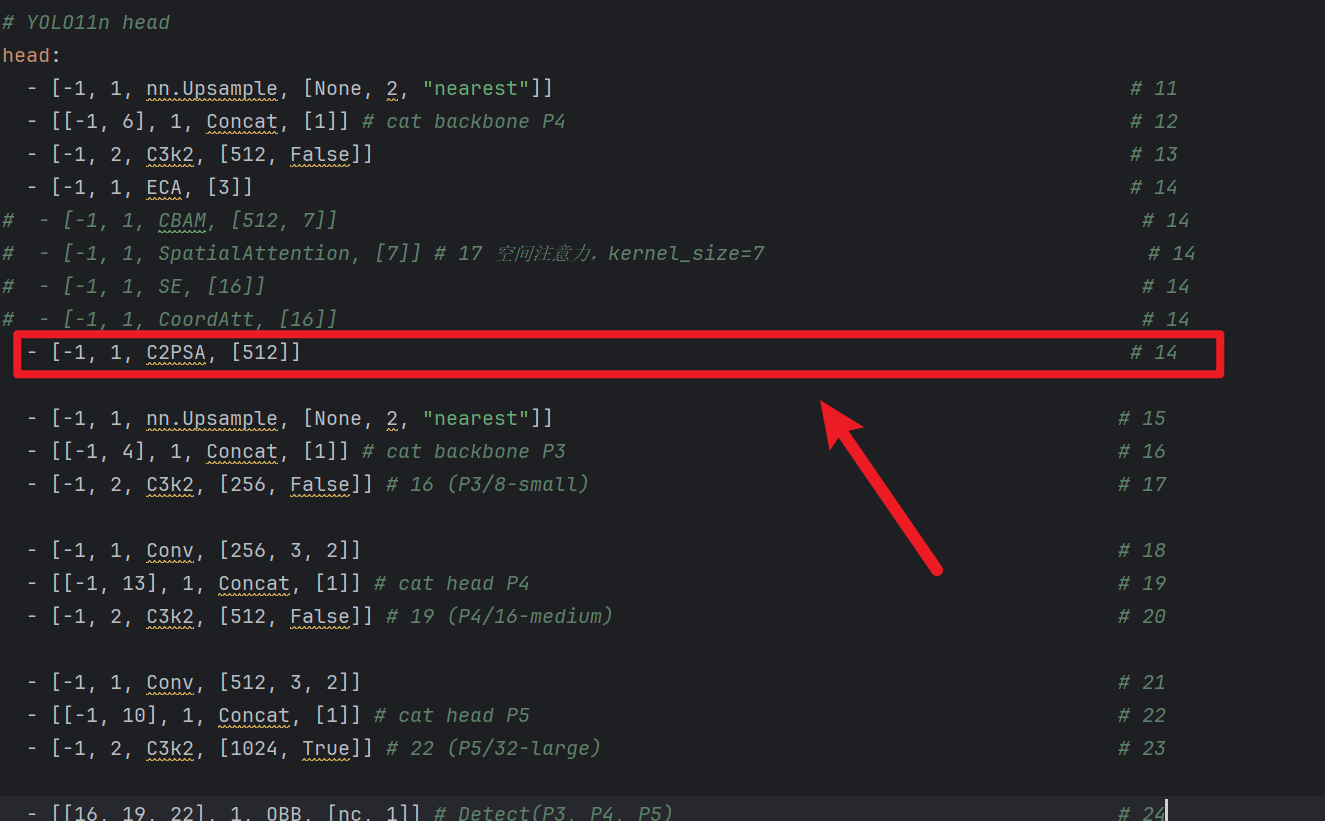

- 比如:你当前是3个检测层,那就插入到P3的【上采样 + concat + C3k2】的【前面】(就是第14层)

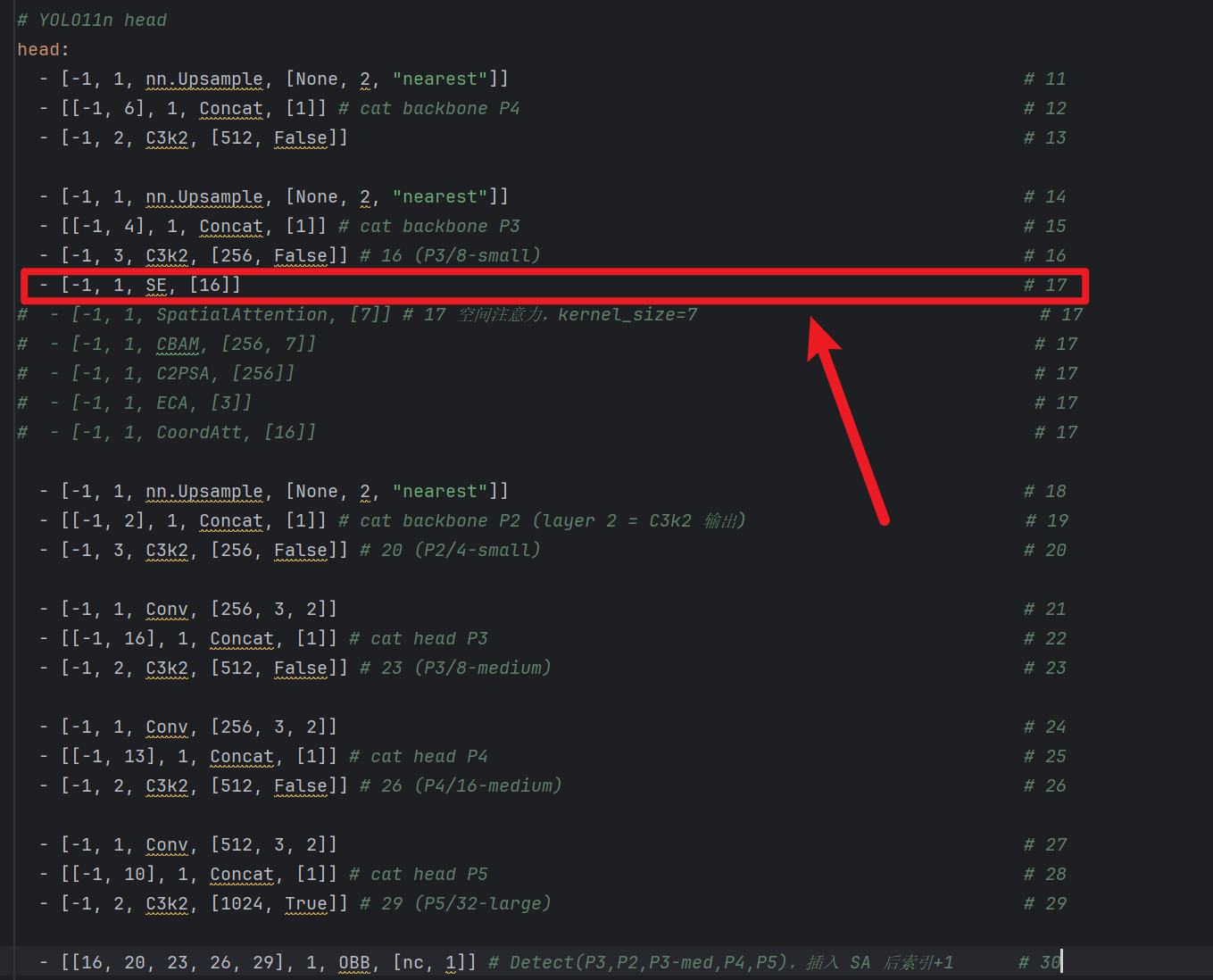

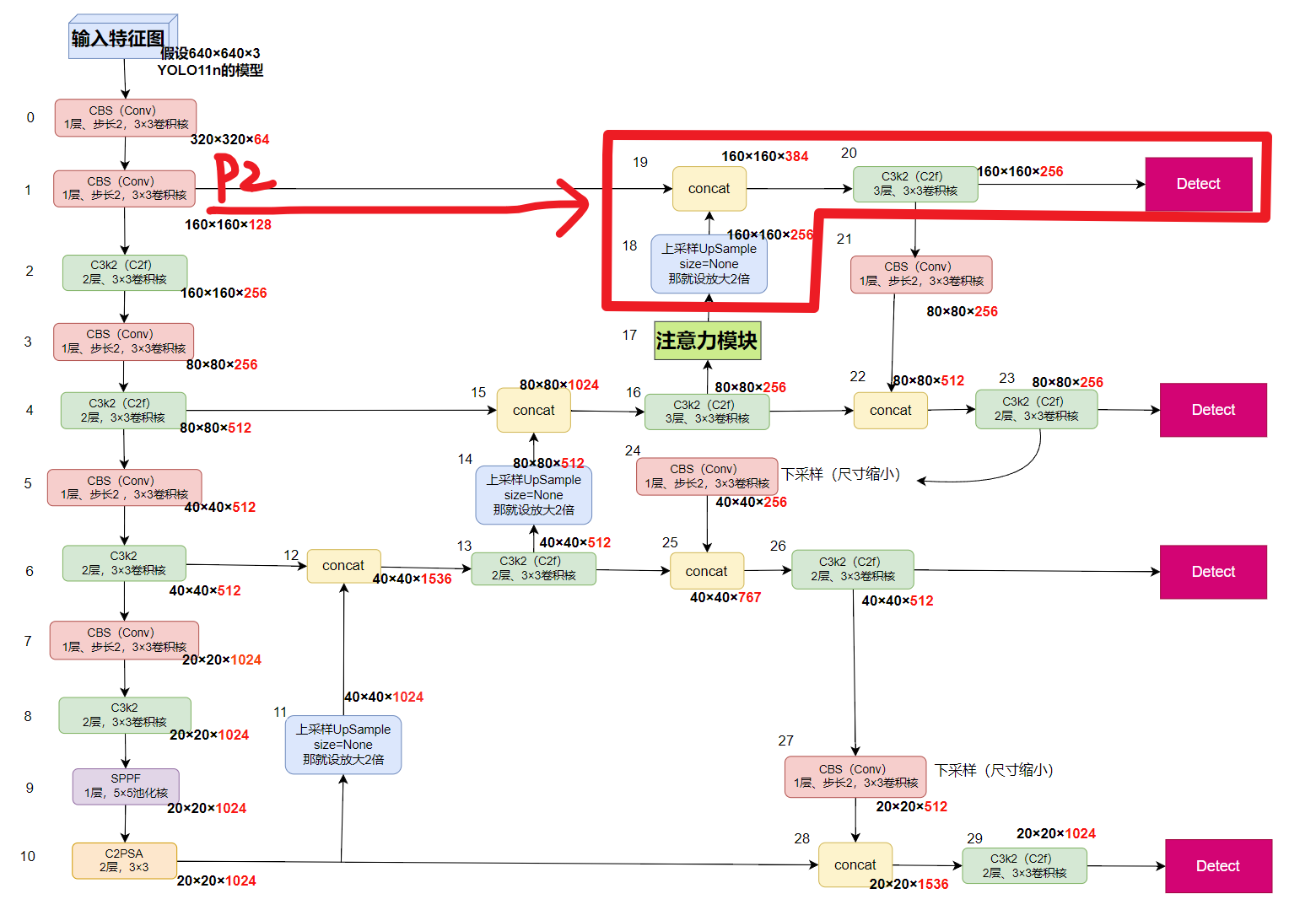

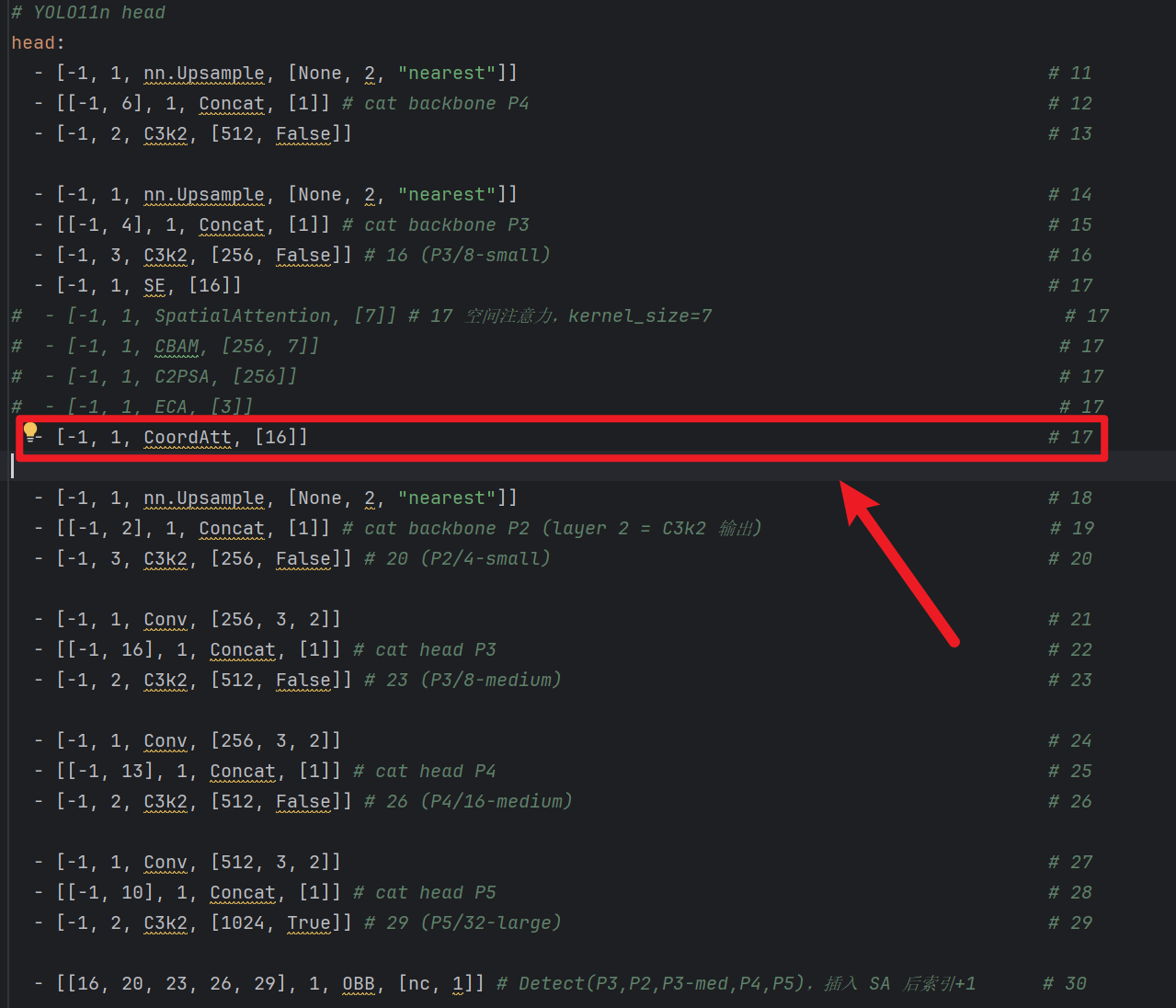

- 比如:你当前是4个检测层,那就插入到P2的【上采样 + concat + C3k2】的【前面】(就是第17层)

- 2、SE后面只用填一个参数:[16]

- 代表的是【压缩比:reduction=16】,不用管为什么,这就是默认的

2、CBAM模块

因为YOLO官方有相关代码,只不过没有引入,所以我们可以用官方的代码引入、也可以自己写,两种方式使用!

1)导入【CBAM】模块

【直接用官方给的CBAM模块(推荐)】

去到你的ultralytics安装路径下:

- windows本地路径:

- 去你pycharm下面点开 “外部库 / site-packages / ultralytics / nn / modules”,

- 或者在我的电脑的 “你的conda安装目录 / envs / 你当前虚拟环境目录 / Lib / site-packages / ultralytics / nn / modules”

- 如果用autodl服务器的linux系统下路径:“ / root / miniconda3 / envs / 你的虚拟环境目录 / lib / pythonx.x / site-packages / ultralytics / nn / modules”

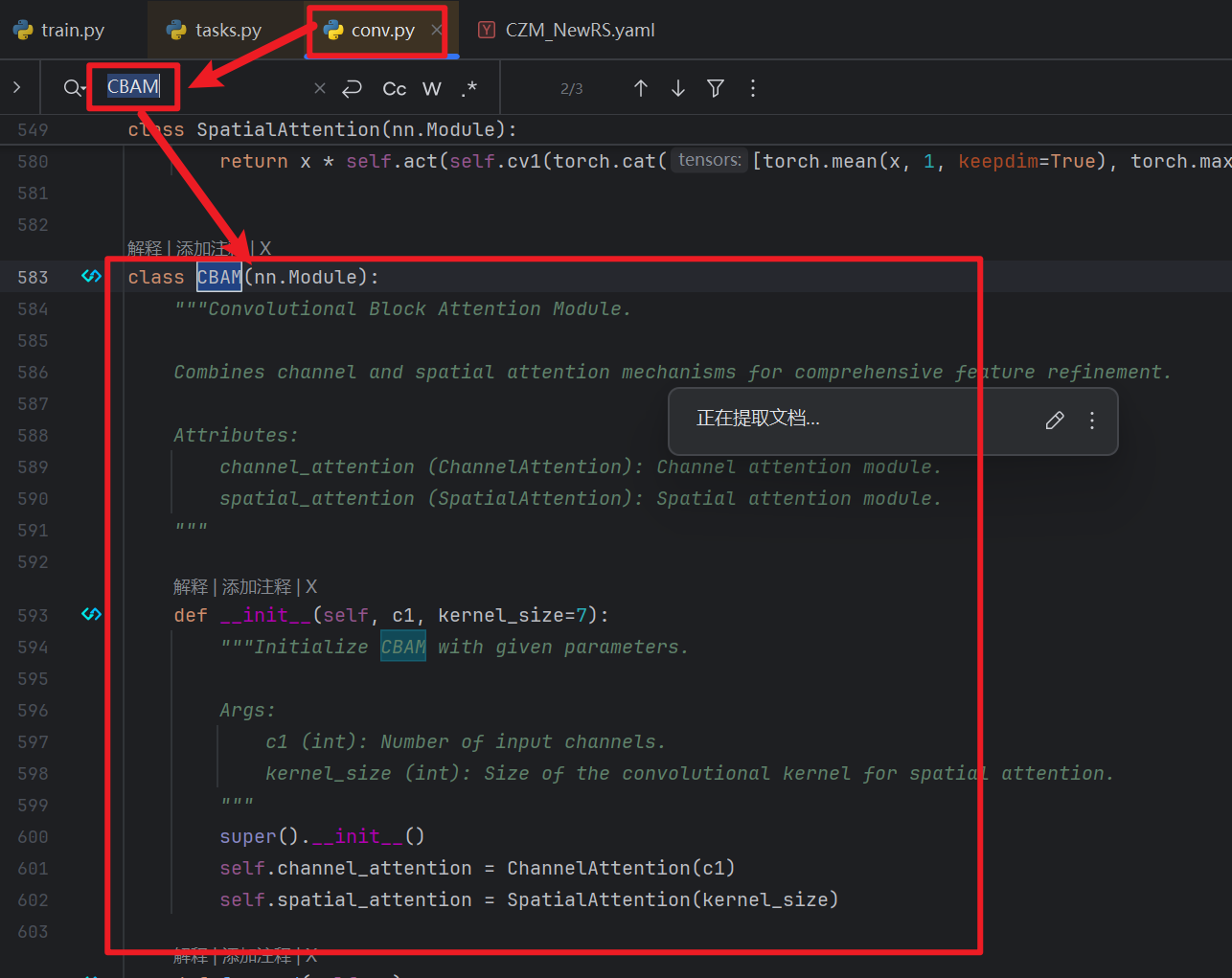

- 查找【conv.py】有无该模块

- 找到ultralytics的 nn / models目录后,可以看到一个【conv.py】文件,这个文件就是定义了各个模块,我们可以【Ctrl + F】查找一下有没有CBAM这个模块

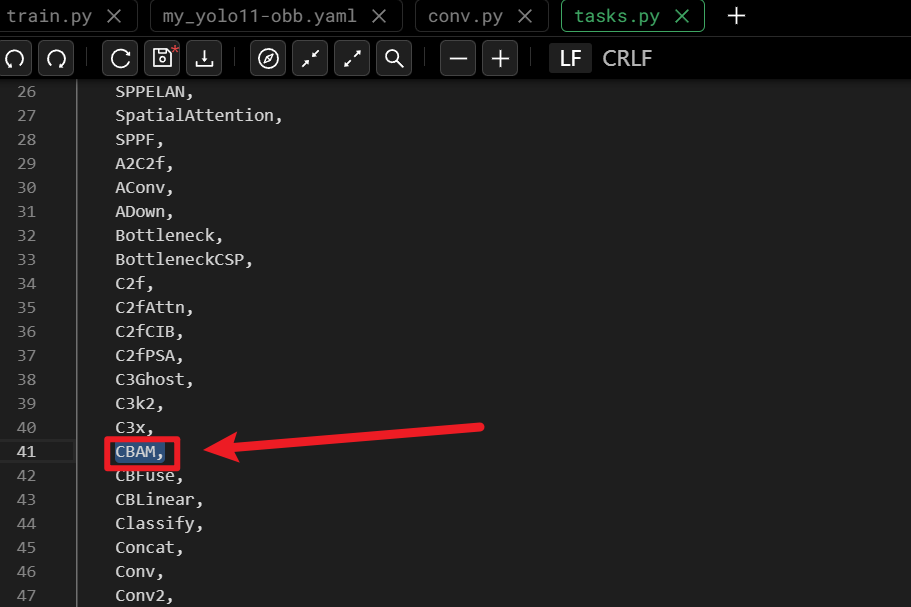

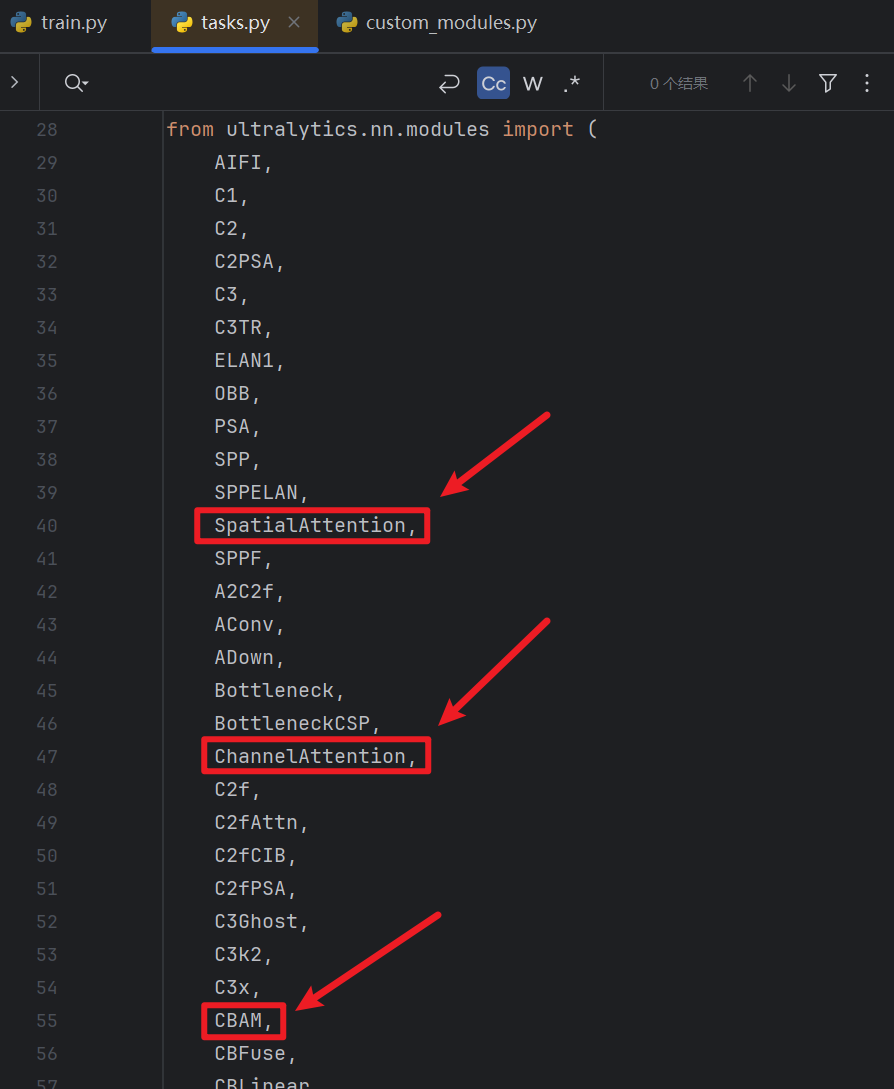

- 如果有CBAM模块,那么我们继续在ultralytics的 nn目录下找到【task.py】文件

- 然后在代码前面 from ultralytics.nn.modules import (......)里面加上【CBAM】,这样task.py才可以用到ultralytics的 nn / models / conv.py的CBAM模块

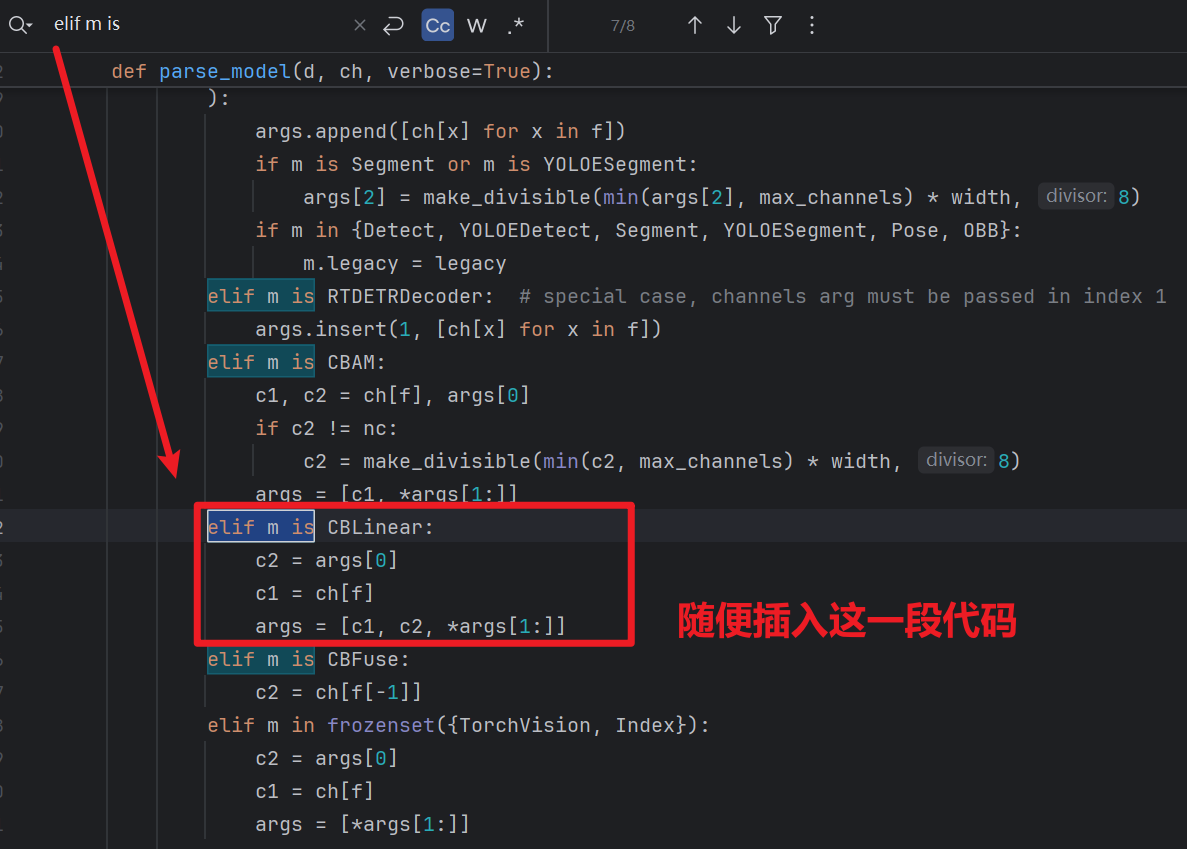

- 然后还是在task.py,【Ctrl + F】查找一下 “elif m is”就能找到下图这么一块地方

- 然后插入一段代码,一定要补上不然会报错!!!:

elif m is CBLinear: c2 = args[0] c1 = ch[f] args = [c1, c2, *args[1:]]

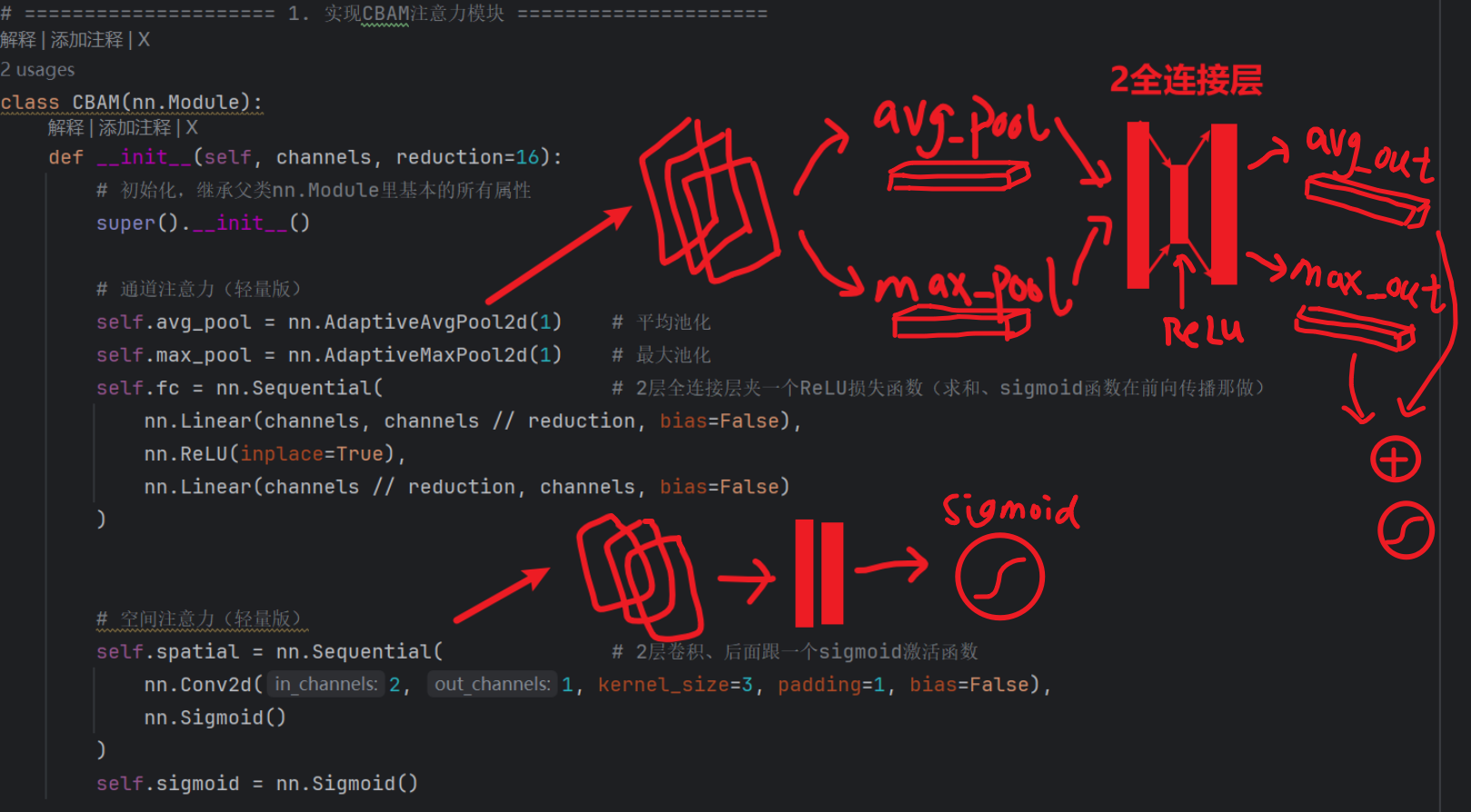

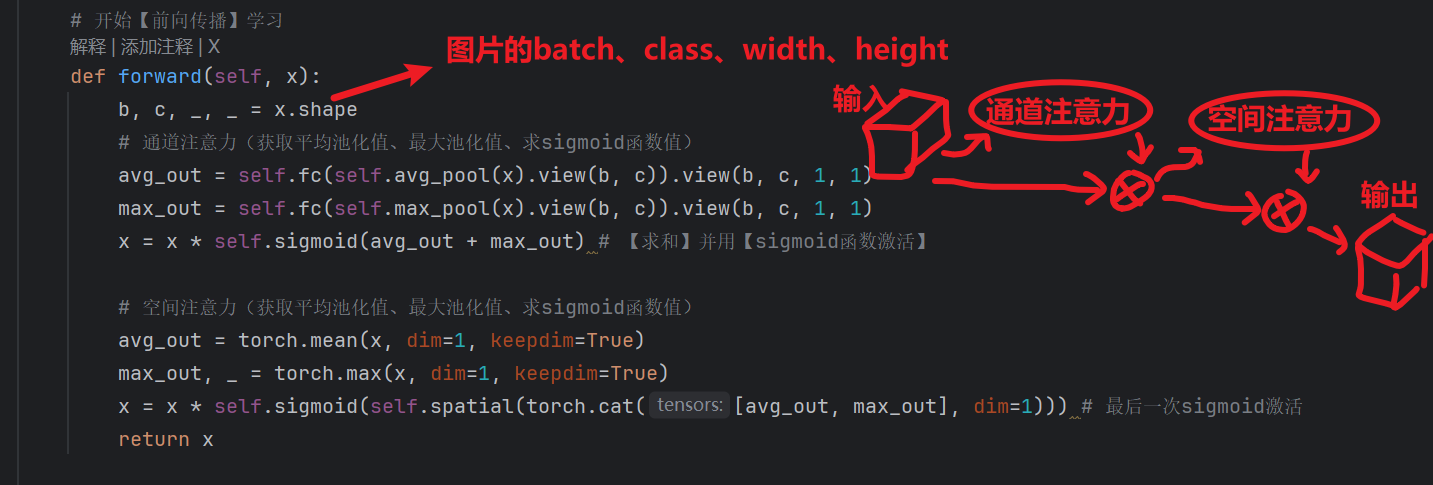

【自定义手写CBAM模块(想懂原理可以试试)】

有的ultralytics包可能特殊点,conv.py里没有CBAM这玩意,那就只能我们自己手写了

回到上面我给的CBAM结构图,只要记住了CBAM的结构,就可以创建一个CBAM模块了(就是背 “前人” 定义好的结构就行):

- 1、通道注意力:

- 平均池化 + 最大池化 + 2个全连接层夹1个损失函数

- 2、空间注意力:

- 1个卷积 + sigmoid激活函数

- 最后,在【train.py】代码注入CBAM

- 因为我们写的自定义CBAM模块不在ultralytics源码里,我们要加载它,就需要先在运行train.py的时候,把它注入task.py里

(具体代码我会放在在最后,可以直接复制拿去直接用)

然后自定义代码模块注入记得做,前面SE模块写过了。。。。。

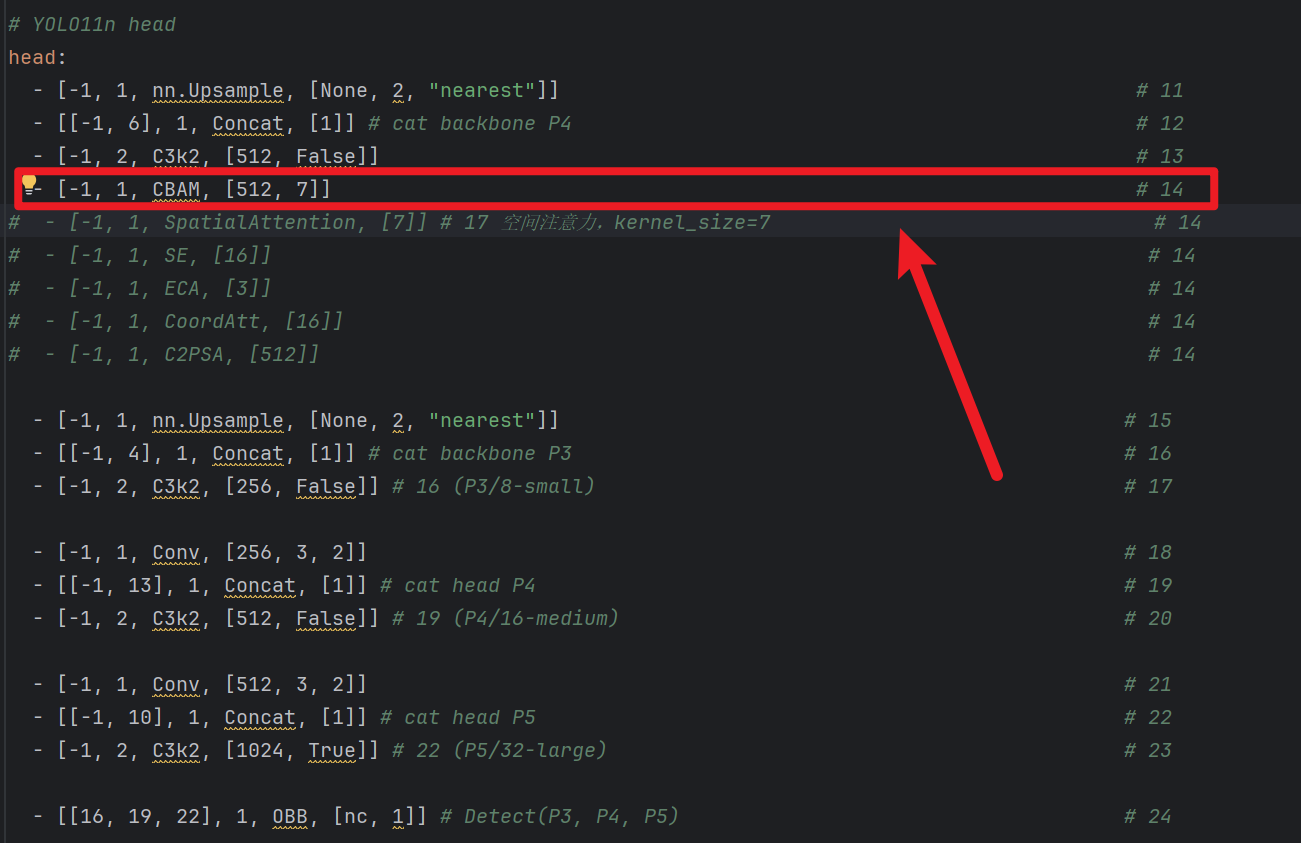

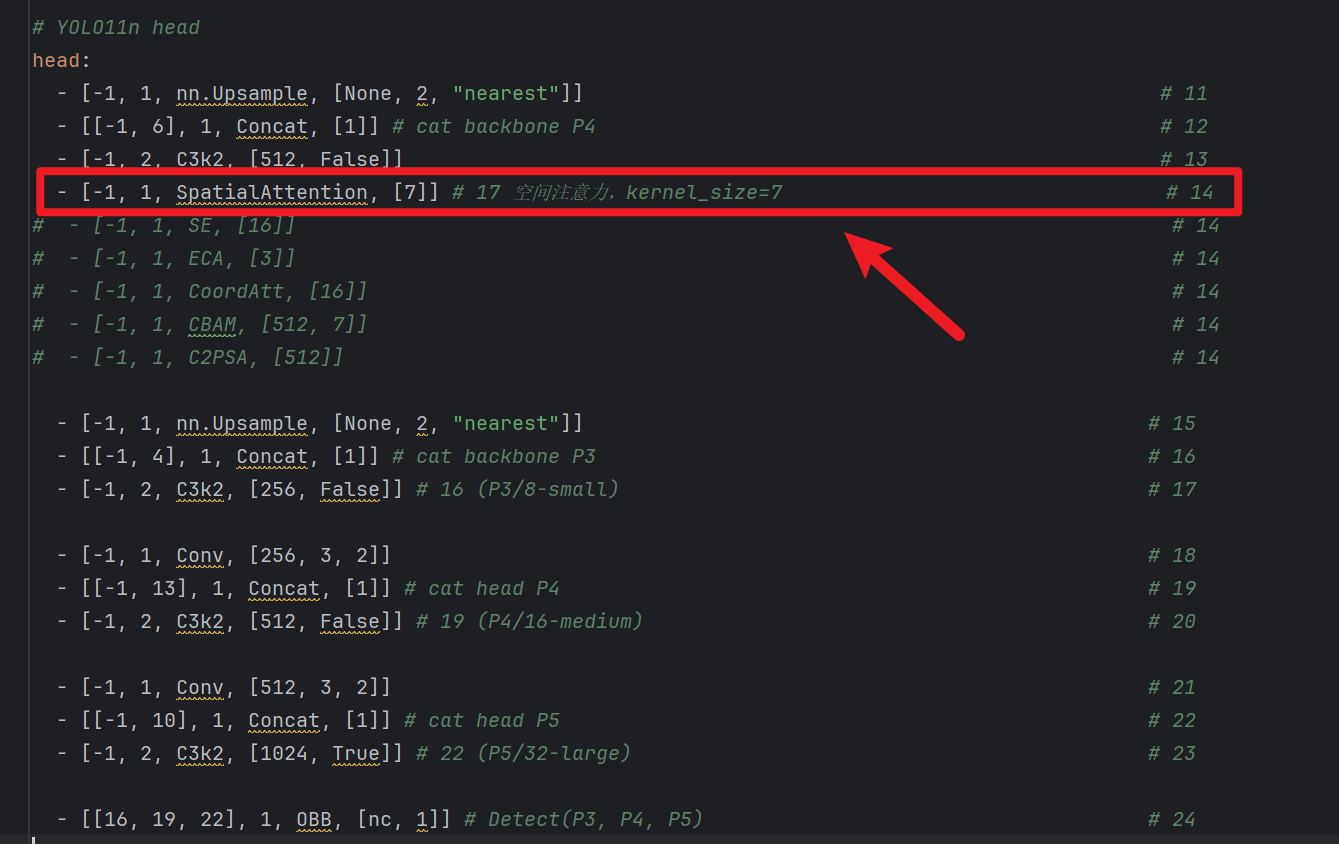

2)yaml网络结构插入

提醒:【注意力模块】不改变通道数!!!!!!!

那么我们应该插到哪?怎么插呢?————根【SE】一样!

- 1、插入到【捕捉最大尺寸】检测头的【上采样 + concat + C3k2】的【前面】

- 比如:你当前是3个检测层,那就插入到P3的【上采样 + concat + C3k2】的【前面】(就是第14层)

- SpatialAttention和CBAM同理

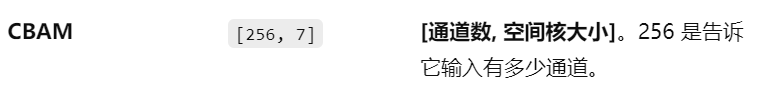

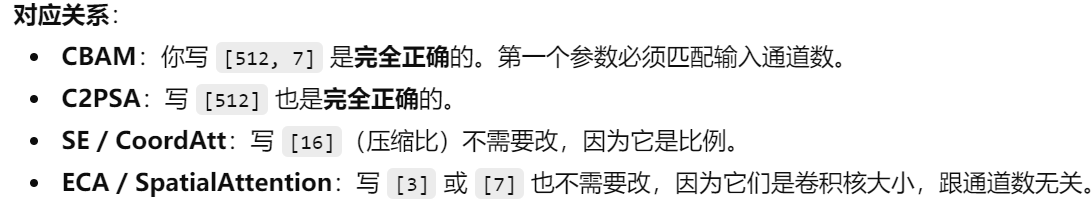

- 2、后面的参数怎么填

- CBAM后面参数:[? , ?]

- 第一个代表【通道数】,那么上一行是多少他就照抄就行,因为【注意力模块】不改变通道数!!

- 第二个代表【卷积核数量】,一般默认7

- SpatialAttention后面参数[?]

- 代表【卷积核数量】,一般默认7

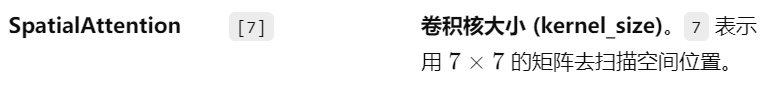

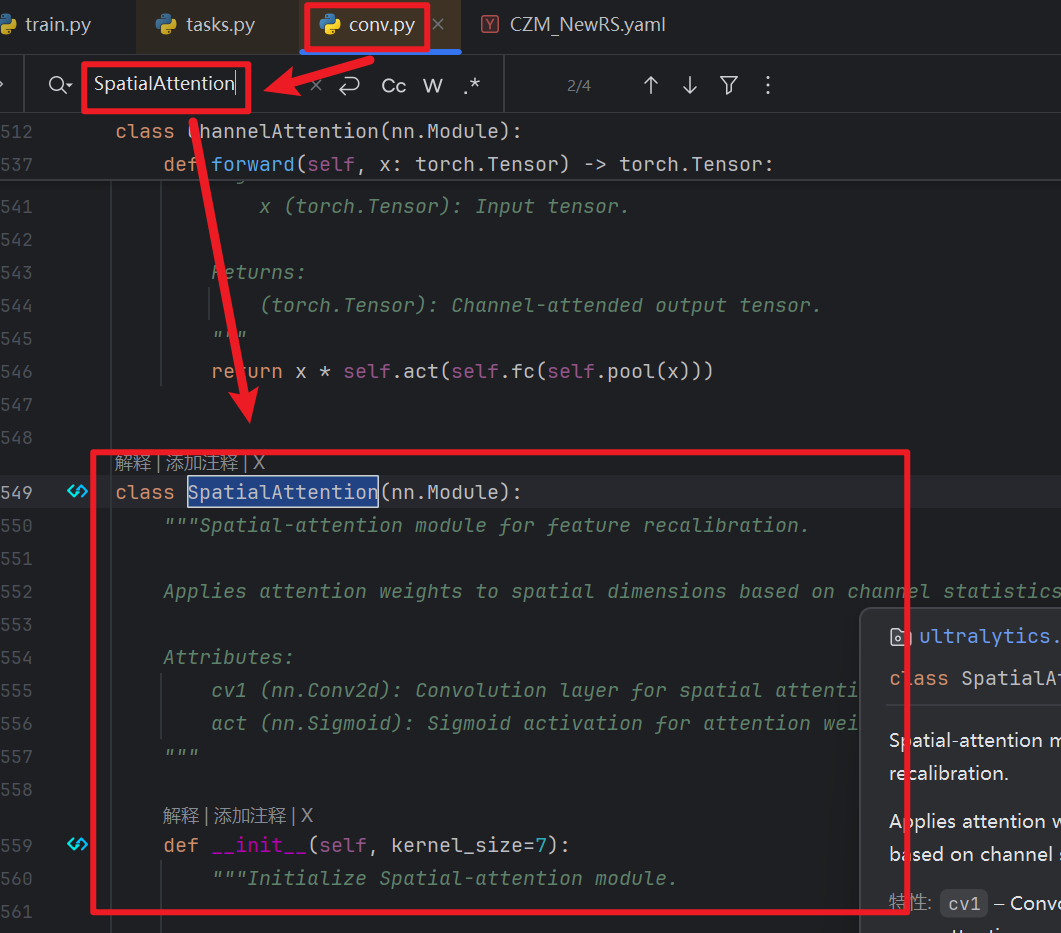

3)拓展:单独拆出【通道注意力】和【空间注意力】

这里我当时咨询chatGPT的时候,我说的是想要单独加强【空间定位】的注意力,他一开始是把【空间注意力:SpatialAttention】拆出来了来训练的,所以我单独研究了一下,CBAM的【通道注意力】和【空间注意力】是可以单独分为两个注意力模块使用的

【使用方法】:

- 1、yolo官方在【conv.py】写了这两个注意力模块的

- 2、那么直接像CBAM一样插入【task.py】就行了,只需要在开头导入这一步就够了

- 然后如果要手动自己写代码,结构上会有少许区别:

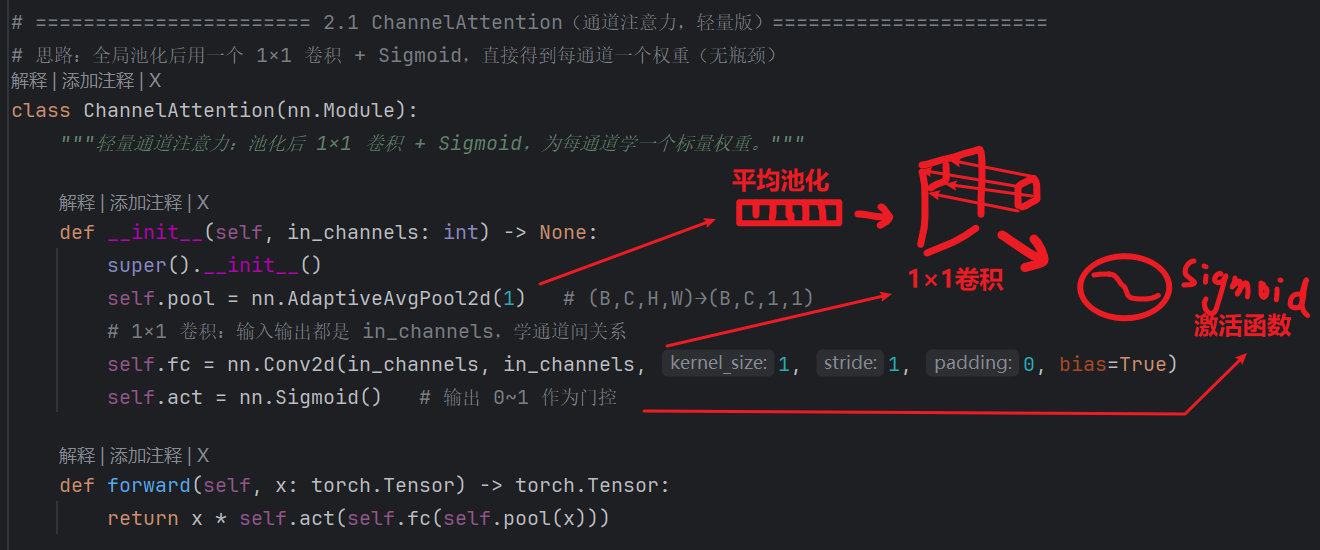

- 【通道注意力ChannelAttention】:

- CBAM的通道注意力部分采用标准的论文结构:双路池化 + 两层全连接层 + 相加 + Sigmoid

- 而YOLO单独拆出来的ChannelAttention是 “轻量版”:单路池化 + 一层卷积 + Sigmoid

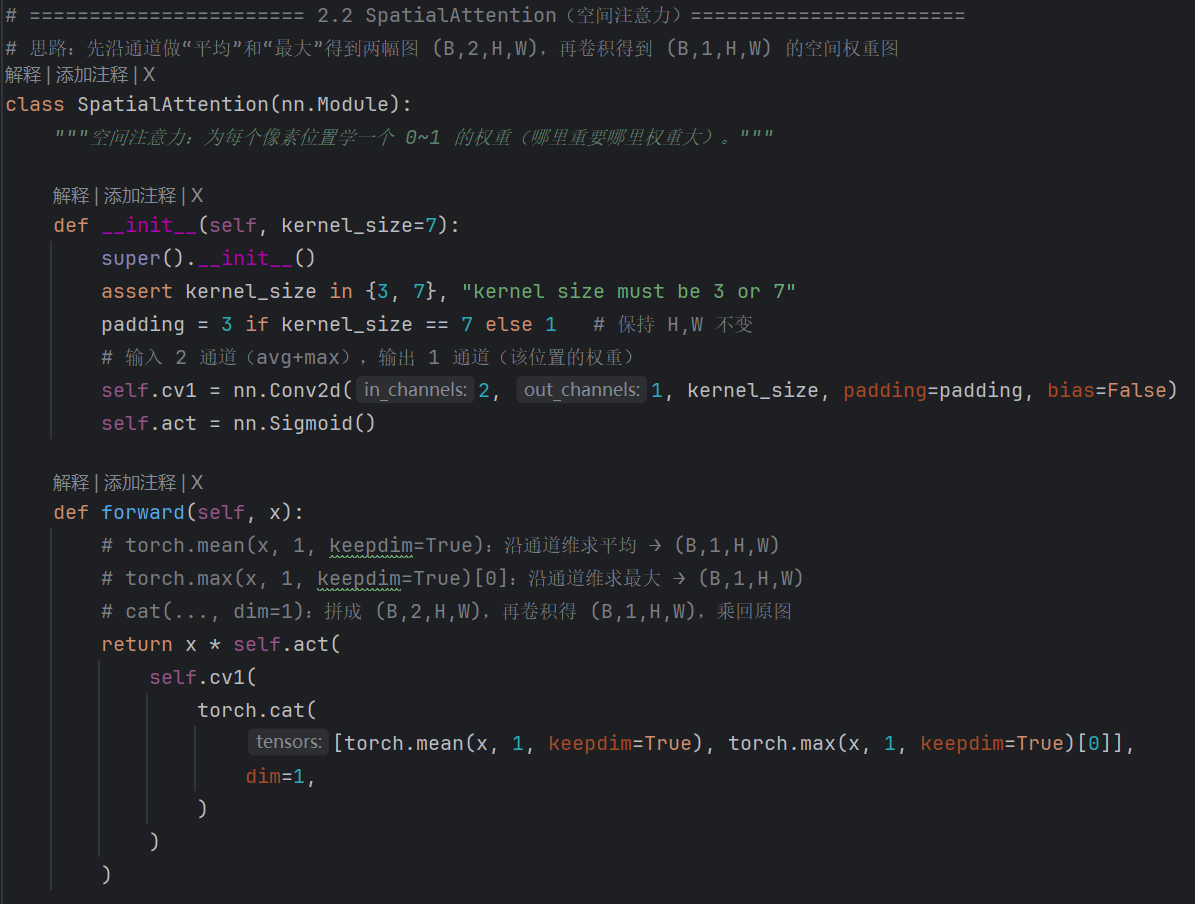

- 【空间注意力SpatialAttention】:

- 结构上倒是没有太大区别,只不过写法有点区别

- 但是这里我没有仔细介绍是因为,前面说过,不管是单独使用【通道注意力ChannelAttention】、还是单独使用【空间注意力SpatialAttention】,效果都并没有明显变好!!!!只有像CBAM那样【先“ChannelAttention” 再“SpatialAttention”】的注意力效果才有用!!!!

3、ECA模块

1)导入【ECA】模块

YOLO依旧没有这块代码,需要我们自己手写:

- 1、先在自定义模块脚本写他的代码:

- 解析模块有点烦了,各位自己根据图片和注释自己看吧

2、【注意:一定要做!!!!】

- 和SE一样,自定义模块还需要从【train.py】前面注入:

- 对应task.py那,你注册的当前train.py路径为全局环境变量“XXX”,然后task.py才能获取XXX的时候读到train.py路径

import sys import os from pathlib import Path # 必须最先做:把项目根加入 path,后面 from utils.custom_modules 才能找到 # 前面的 _ 不是语法要求,只是命名习惯,用来表达“内部用 / 不想被当公开接口”的意思 _你设置的全局环境变量 = Path(__file__).resolve().parent if str(_你设置的全局环境变量) not in sys.path: sys.path.insert(0, str(_你设置的全局环境变量)) # 让 DDP 子进程继承到 os.environ["你设置的全局环境变量"] = str(_你设置的全局环境变量)- task.py能读到train.py后,再把我们自定义的模块注入

# 让 yaml 里能使用自定义的 SE、ECA、CoordAtt(必须在 import YOLO 之前注入到 tasks) import ultralytics.nn.tasks as _ultra_tasks from utils.custom_modules import SE, ECA, CoordAtt _ultra_tasks.SE = SE _ultra_tasks.ECA = ECA _ultra_tasks.CoordAtt = CoordAtt

2)yaml网络结构插入

提醒:【注意力模块】不改变通道数!!!!!!!

那么我们应该插到哪?怎么插呢?

- 还是跟上面模块原理一样

- 后面的参数怎么填

- ECA后面参数:[3]

- 代表【1D局部卷积核数量】,一般默认3

4、Coordinate Attention模块

1)导入【ECA】模块

YOLO依旧没有这块代码,需要我们自己手写:

- 1、先在自定义模块脚本写他的代码:

- 解析模块有点烦了,各位自己根据图片和注释自己看吧

2、【注意:一定要做!!!!】

- 和SE一样,自定义模块还需要从【train.py】前面注入:

- 对应task.py那,你注册的当前train.py路径为全局环境变量“XXX”,然后task.py才能获取XXX的时候读到train.py路径

import sys import os from pathlib import Path # 必须最先做:把项目根加入 path,后面 from utils.custom_modules 才能找到 # 前面的 _ 不是语法要求,只是命名习惯,用来表达“内部用 / 不想被当公开接口”的意思 _你设置的全局环境变量 = Path(__file__).resolve().parent if str(_你设置的全局环境变量) not in sys.path: sys.path.insert(0, str(_你设置的全局环境变量)) # 让 DDP 子进程继承到 os.environ["你设置的全局环境变量"] = str(_你设置的全局环境变量)- task.py能读到train.py后,再把我们自定义的模块注入

# 让 yaml 里能使用自定义的 SE、ECA、CoordAtt(必须在 import YOLO 之前注入到 tasks) import ultralytics.nn.tasks as _ultra_tasks from utils.custom_modules import SE, ECA, CoordAtt _ultra_tasks.SE = SE _ultra_tasks.ECA = ECA _ultra_tasks.CoordAtt = CoordAtt

2)yaml网络结构插入

提醒:【注意力模块】不改变通道数!!!!!!!

那么我们应该插到哪?怎么插呢?

- 还是跟上面模块原理一样

- 后面的参数怎么填

- ECA后面参数:[16]

- 代表的是【压缩比:reduction=16】,不用管为什么,这就是默认的

总结

关于注入模块

- 目前yolo11只有ChannelAttention、SptailAttention、CBAM这三个注意力模块可以直接加入到task.py使用

- 其他的注意力模块需要我们手动编写,并在train.py开头注入

关于【yaml】部分插入

- 1、位置你就记着要么插14行、要么17行,就这么简单就完事了

- 2、参数部分:

- 要填【通道数】得模块直接抄上一行的、

- 压缩比例reduction固定16、

- 1d卷积固定3、

- 2d卷积固定7就行了

四、完整代码:

1、我的train.py代码:

import sys import os from pathlib import Path # 必须最先做:把项目根加入 path,后面 from utils.custom_modules 才能找到 # 前面的 _ 不是语法要求,只是命名习惯,用来表达“内部用 / 不想被当公开接口”的意思 _MyProject_ROOT = Path(__file__).resolve().parent if str(_MyProject_ROOT) not in sys.path: sys.path.insert(0, str(_MyProject_ROOT)) # 让 DDP 子进程继承到 os.environ["MyProject_ROOT"] = str(_MyProject_ROOT) import torch from ultralytics import YOLO # 让 yaml 里能使用自定义的 SE、ECA、CoordAtt(必须在 import YOLO 之前注入到 tasks) import ultralytics.nn.tasks as _ultra_tasks from utils.custom_modules import SE, ECA, CoordAtt _ultra_tasks.SE = SE _ultra_tasks.ECA = ECA _ultra_tasks.CoordAtt = CoordAtt # ⚠️ 注意:AutoDL是Linux环境,路径要改!把数据上传到 /root/autodl-tmp/ 下(强烈建议放这里,读写快) # 我们的数据集yaml DATA_YAML_PATH = "F:\我自己的毕设\YOLO_study\CZM_NewRS\CZM_NewRS.yaml" # 我们自定义的网络模型yaml MODEL_YAML = "my_yaml/11/my_yolo11-obb.yaml" # 我们的load加载的模型权重 MODEL_PATH = "yolo11n-obb.pt" if __name__ == '__main__': # Linux下通常不需要 freeze_support,但留着也不报错 torch.multiprocessing.freeze_support() # 加载模型,建议load换成 s (Small) 或 m (Medium) 模型,AutoDL显卡完全跑得动,精度比n高很多 model = YOLO(MODEL_YAML).load(MODEL_PATH) results = model.train( data=DATA_YAML_PATH, epochs=100, # 多跑一点,100轮对于从头练可能刚收敛 patience=60, # 60轮不提升就停,省点钱 imgsz=1240, # 1280也可以,但1024是标准倍数,训练更稳 # 【速度拉满配置】 device=0, # ❗关键:开启双卡并行训练! batch=-1, # 如果设batch=16,则双卡合计32;设-1自动适配 workers=6, # 有40核CPU,大胆给!16-24都可以,数据加载飞快 # cache="ram", # ❗有180G内存,直接把数据全读进RAM,速度起飞! amp=True, # 混合精度训练,速度快显存占用少 # 【增强参数微调:适当即可,拒绝卡通画】 augment=True, hsv_h=0.015, # 色相微调,保持不变 hsv_s=0.3, # ❗降下来!原0.7太高,导致色彩过饱和像动画片 hsv_v=0.3, # ❗降下来!原0.4太高,导致对比度太强 # 【几何增强】 degrees=5.0, # 稍微给点旋转,OBB任务很需要 translate=0.05, # 稍微平移一下 scale=0.5, # 尺度缩放 perspective=0.0, # ✅ 必须0!透视太大,图片都变形了,0.0001都算大的 # 【Mosaic 策略】 mosaic=0.2, # 开启马赛克增强 mixup=0, # 给一点点混合,不要太多 copy_paste=0.0, # ✅ 先关掉(后面再开) close_mosaic=10, # ❗最后10轮关闭Mosaic即可,50轮太早了,浪费了增强效果 # 📉【损失函数权重】提高定位相关权重,不降分类(老师:定位还不够好) # 默认:box=7.5, cls=0.5, dfl=1.5。OBB 的 angle 已含在 box loss 里,无单独权重 box=10.0, # 提高边界框/定位损失权重(默认 7.5) dfl=2.0, # 提高 DFL 损失权重,利于框边更精细(默认 1.5) cls=0.5, # 分类权重保持不变,不降低 # 📉【优化器】 optimizer='SGD', # 这种大数据集,SGD通常比AdamW后期泛化更好 lr0=0.01, lrf=0.01, cos_lr=True, # 余弦退火学习率,训练更丝滑 )

2、我的yolo11.yaml代码

3层检测目标层情况(仅head部分)

# YOLO11n head head: - [-1, 1, nn.Upsample, [None, 2, "nearest"]] # 11 - [[-1, 6], 1, Concat, [1]] # cat backbone P4 # 12 - [-1, 2, C3k2, [512, False]] # 13 - [-1, 1, ECA, [3]] # 14 # - [-1, 1, CBAM, [512, 7]] # 14 # - [-1, 1, SpatialAttention, [7]] # 17 空间注意力,kernel_size=7 # 14 # - [-1, 1, SE, [16]] # 14 # - [-1, 1, CoordAtt, [16]] # 14 - [-1, 1, C2PSA, [512]] # 14 - [-1, 1, nn.Upsample, [None, 2, "nearest"]] # 15 - [[-1, 4], 1, Concat, [1]] # cat backbone P3 # 16 - [-1, 2, C3k2, [256, False]] # 16 (P3/8-small) # 17 - [-1, 1, Conv, [256, 3, 2]] # 18 - [[-1, 13], 1, Concat, [1]] # cat head P4 # 19 - [-1, 2, C3k2, [512, False]] # 19 (P4/16-medium) # 20 - [-1, 1, Conv, [512, 3, 2]] # 21 - [[-1, 10], 1, Concat, [1]] # cat head P5 # 22 - [-1, 2, C3k2, [1024, True]] # 22 (P5/32-large) # 23 - [[16, 19, 22], 1, OBB, [nc, 1]] # Detect(P3, P4, P5) # 244层检测目标层情况(仅head部分)

# YOLO11n head head: - [-1, 1, nn.Upsample, [None, 2, "nearest"]] # 11 - [[-1, 6], 1, Concat, [1]] # cat backbone P4 # 12 - [-1, 2, C3k2, [512, False]] # 13 - [-1, 1, nn.Upsample, [None, 2, "nearest"]] # 14 - [[-1, 4], 1, Concat, [1]] # cat backbone P3 # 15 - [-1, 3, C3k2, [256, False]] # 16 (P3/8-small) # 16 - [-1, 1, SE, [16]] # 17 # - [-1, 1, SpatialAttention, [7]] # 17 空间注意力,kernel_size=7 # 17 # - [-1, 1, CBAM, [256, 7]] # 17 # - [-1, 1, C2PSA, [256]] # 17 # - [-1, 1, ECA, [3]] # 17 - [-1, 1, CoordAtt, [16]] # 17 - [-1, 1, nn.Upsample, [None, 2, "nearest"]] # 18 - [[-1, 2], 1, Concat, [1]] # cat backbone P2 (layer 2 = C3k2 输出) # 19 - [-1, 3, C3k2, [256, False]] # 20 (P2/4-small) # 20 - [-1, 1, Conv, [256, 3, 2]] # 21 - [[-1, 16], 1, Concat, [1]] # cat head P3 # 22 - [-1, 2, C3k2, [512, False]] # 23 (P3/8-medium) # 23 - [-1, 1, Conv, [256, 3, 2]] # 24 - [[-1, 13], 1, Concat, [1]] # cat head P4 # 25 - [-1, 2, C3k2, [512, False]] # 26 (P4/16-medium) # 26 - [-1, 1, Conv, [512, 3, 2]] # 27 - [[-1, 10], 1, Concat, [1]] # cat head P5 # 28 - [-1, 2, C3k2, [1024, True]] # 29 (P5/32-large) # 29 - [[16, 20, 23, 26, 29], 1, OBB, [nc, 1]] # Detect(P3,P2,P3-med,P4,P5),插入 SA 后索引+1 # 30

3、我的自定义注意力模块代码(包含所有注意力模块)

""" 自定义注意力模块(SE / ECA / CoordAtt / Channel / Spatial / CBAM) 用于 YOLO 等检测网络的 backbone 或 head,增强通道或空间上的重要特征。 【常用名词速查】 - in_channels:输入通道数(本文件已统一用此名)。c_ / mid_channels:中间压缩后的通道数(如 in_channels//r),不是输入 - // :整除(向下取整),例如 256//16=16,用来做“压缩比” - self.fc / self.gate:可学习的子网络;gate 一般指“门控”,输出 0~1 的权重 - squeeze(dim):去掉大小为 1 的维度;transpose:交换维度顺序;view:拉成指定形状 【若自己按逻辑写】 - SEnet:先全局平均池化得到每通道一个数 → 两层“全连接”(降维再升维)→ Sigmoid 得到权重 → 乘回原图。 - ChannelAttention:池化后一个 1×1 卷积 + Sigmoid,直接得到每通道权重(无瓶颈)。 - SpatialAttention:沿通道做 mean 和 max 得到两幅 (1,H,W),拼成 (2,H,W) → 卷积得到 (1,H,W) 权重图 → 乘回原图。 【nn.Conv2d 参数速查】 nn.Conv2d(输入通道, 输出通道, kernel_size, stride=1, padding=0, bias=...) - 第1个参数:输入通道数(输入特征图有几层“通道”)。 - 第2个参数:输出通道数(卷积后得到几层通道)。 - 第3个参数:卷积核大小(如 1 表示 1×1,只做通道混合、不改变 H,W;3 或 7 表示 3×3、7×7)。 - stride:步长,默认 1(不缩小尺寸)。 - padding:四周补零圈数,通常取 (kernel_size-1)//2 使 H、W 不变。 - bias:是否加偏置,True/False。 """ # ---------- 下面这些你可能会碰到的写法简要说明 ---------- # a // b :整除,结果向下取整。如 7//2=3,256//16=16。用来算“压缩后通道数”。 # self.fc :通常指全连接/线性层(或 1×1 卷积),这里用来学“通道权重”或做降维。 # self.gate :门控,一般接 Sigmoid,输出 (0,1),表示“保留多少”。 # .squeeze(-1):去掉最后一维且大小为 1 的维度;(B,C,1,1) → (B,C,1)。 # .transpose(1,2):交换第 1、2 维;(B,C,1) → (B,1,C),方便 Conv1d 在 C 维上卷积。 # .view(b,c) :把张量拉成 (b,c) 形状,不改变元素总数;常用于池化后喂给 Linear。 # .permute(0,1,3,2):按给定顺序重排维度,这里相当于把 dim=2 和 dim=3 互换。 import torch import torch.nn as nn # ============================ 1. SE(Squeeze-and-Excitation)============================ # 思路:对每个通道做“全局池化 → 两层全连接 → 得到该通道的权重”再乘回原特征 class SE(nn.Module): """Squeeze-and-Excitation:通道注意力,为每个通道学一个 0~1 的权重。""" # r 是“压缩比”(reduction ratio),用来算中间层的通道数 def __init__(self, in_channels, r=16): super().__init__() # in_channels // r:中间层通道数(压缩比)。 # max(1, ...) 第一个参数1,是为了防止r过大时mid_channels变成 0,至少也得是1,参数里取最大 mid_channels = max(1, in_channels // r) self.pool = nn.AdaptiveAvgPool2d(1) # 全局平均池化:(B,C,H,W)→(B,C,1,1),每个通道一个数 self.fc1 = nn.Conv2d(in_channels, mid_channels, 1, bias=True) # 降维 in_channels → mid_channels self.act = nn.SiLU() # SiLU或ReLU都可以 self.fc2 = nn.Conv2d(mid_channels, in_channels, 1, bias=True) # 升回 mid_channels → in_channels self.gate = nn.Sigmoid() # 激活函数:把输出压到 (0,1) def forward(self, x): w = self.pool(x) # (B,C,H,W)→(B,C,1,1) w = self.fc2(self.act(self.fc1(w))) # 瓶颈结构:C → mid_channels → C return x * self.gate(w) # 逐通道缩放,重要通道权重大 # ======================= 2.1 ChannelAttention(通道注意力,轻量版)======================= # 思路:全局池化后用一个 1×1 卷积 + Sigmoid,直接得到每通道一个权重(无瓶颈) class ChannelAttention(nn.Module): """轻量通道注意力:池化后 1×1 卷积 + Sigmoid,为每通道学一个标量权重。""" def __init__(self, in_channels: int) -> None: super().__init__() self.pool = nn.AdaptiveAvgPool2d(1) # (B,C,H,W)→(B,C,1,1) # 1×1 卷积:输入输出都是 in_channels,学通道间关系 self.fc = nn.Conv2d(in_channels, in_channels, 1, 1, 0, bias=True) self.act = nn.Sigmoid() # 输出 0~1 作为门控 def forward(self, x: torch.Tensor) -> torch.Tensor: return x * self.act(self.fc(self.pool(x))) # ======================= 2.2 SpatialAttention(空间注意力)======================= # 思路:先沿通道做“平均”和“最大”得到两幅图 (B,2,H,W),再卷积得到 (B,1,H,W) 的空间权重图 class SpatialAttention(nn.Module): """空间注意力:为每个像素位置学一个 0~1 的权重(哪里重要哪里权重大)。""" def __init__(self, kernel_size=7): super().__init__() assert kernel_size in {3, 7}, "kernel size must be 3 or 7" padding = 3 if kernel_size == 7 else 1 # 保持 H,W 不变 # 输入 2 通道(avg+max),输出 1 通道(该位置的权重) self.cv1 = nn.Conv2d(2, 1, kernel_size, padding=padding, bias=False) self.act = nn.Sigmoid() def forward(self, x): # torch.mean(x, 1, keepdim=True):沿通道维求平均 → (B,1,H,W) # torch.max(x, 1, keepdim=True)[0]:沿通道维求最大 → (B,1,H,W) # cat(..., dim=1):拼成 (B,2,H,W),再卷积得 (B,1,H,W),乘回原图 return x * self.act( self.cv1( torch.cat( [torch.mean(x, 1, keepdim=True), torch.max(x, 1, keepdim=True)[0]], dim=1, ) ) ) # ============================ 2.3 CBAM(通道+空间串联)============================ # 思路:先做通道注意力再做空间注意力,两段都用“avg+max → 小网络 → sigmoid”的形式 class CBAM(nn.Module): """CBAM = Channel + Spatial:先通道再空间,两段注意力串联。""" def __init__(self, in_channels, reduction=16, kernel_size=7): super().__init__() mid_channels = max(1, in_channels // reduction) # 通道注意力(轻量版) self.avg_pool = nn.AdaptiveAvgPool2d(1) # 平均池化,尺寸:[b,c,1,1] self.max_pool = nn.AdaptiveMaxPool2d(1) # 最大池化,尺寸:[b,c,1,1] self.fc = nn.Sequential( nn.Linear(in_channels, mid_channels, bias=False), nn.ReLU(inplace=True), nn.Linear(mid_channels, in_channels, bias=False), ) # 空间注意力(轻量版) self.spatial = nn.Sequential( # 2层卷积、后面跟一个sigmoid激活函数 nn.Conv2d(2, 1, kernel_size=3, padding=1, bias=False), nn.Sigmoid() ) self.sigmoid = nn.Sigmoid() # 开始【前向传播】学习 def forward(self, x): b, c, _, _ = x.shape # 通道注意力(获取平均池化值、最大池化值、求sigmoid函数值) # view(b, c):把 (B,C,1,1) 拉成 (B,C) 才能喂给 Linear avg_out = self.fc(self.avg_pool(x).view(b, c)).view(b, c, 1, 1) max_out = self.fc(self.max_pool(x).view(b, c)).view(b, c, 1, 1) x = x * self.sigmoid(avg_out + max_out) # 【求和】并用【sigmoid函数激活】 # 空间注意力(获取平均池化值、最大池化值、求sigmoid函数值) avg_out = torch.mean(x, dim=1, keepdim=True) max_out, _ = torch.max(x, dim=1, keepdim=True) x = x * self.sigmoid(self.spatial(torch.cat([avg_out, max_out], dim=1))) # 最后一次sigmoid激活 return x # ============================ 3. ECA(Efficient Channel Attention)============================ # 思路:不用全连接,用 1D 卷积在“通道维”上做局部交互,参数量小;需把 (B,C,1,1) 变成 (B,1,C) 才能用 Conv1d class ECA(nn.Module): """ECA:用一维卷积在通道维做局部建模,替代 SE 的全连接,更省参数。""" def __init__(self, in_channels, k=3): super().__init__() k = int(k) if k % 2 == 0: k += 1 # Conv1d 用奇数 kernel 方便两边 padding 相同 self.pool = nn.AdaptiveAvgPool2d(1) # (B,C,H,W)→(B,C,1,1) self.conv = nn.Conv1d(1, 1, kernel_size=k, padding=(k - 1) // 2, bias=False) self.gate = nn.Sigmoid() def forward(self, x): y = self.pool(x) # (B, C, 1, 1) # squeeze(-1):去掉最后一维 (B,C,1,1)→(B,C,1);transpose(1,2):1和2维互换→(B,1,C) # 这样 C 这一维变成 Conv1d 的“长度”,1 是通道维,才能用 nn.Conv1d(1,1,k) y = y.squeeze(-1).transpose(1, 2) # (B, 1, C) y = self.conv(y) # (B, 1, C) # 再变回 (B,C,1,1):transpose(1,2)→(B,C,1),unsqueeze(-1)→(B,C,1,1) y = self.gate(y).transpose(1, 2).unsqueeze(-1) return x * y # ============================ 4. CoordAtt(Coordinate Attention)============================ # 思路:沿 H、W 两个方向分别池化,得到“垂直方向”和“水平方向”的编码,再生成两路注意力图 a_h、a_w,乘回原图 class CoordAtt(nn.Module): """坐标注意力:沿高、宽方向分别池化并生成方向感知的注意力,适合长条/细长目标。""" def __init__(self, in_channels, r=16): super().__init__() mid_channels = max(8, in_channels // r) # (None, 1):高方向保留、宽压成 1 → (B,C,H,1);(1, None):宽保留、高压成 1 → (B,C,1,W) self.pool_h = nn.AdaptiveAvgPool2d((None, 1)) self.pool_w = nn.AdaptiveAvgPool2d((1, None)) self.conv1 = nn.Conv2d(in_channels, mid_channels, 1, bias=False) self.bn1 = nn.BatchNorm2d(mid_channels) self.act = nn.SiLU() self.conv_h = nn.Conv2d(mid_channels, in_channels, 1, bias=False) # 生成“高方向”权重 self.conv_w = nn.Conv2d(mid_channels, in_channels, 1, bias=False) # 生成“宽方向”权重 self.gate = nn.Sigmoid() def forward(self, x): b, c, h, w = x.shape x_h = self.pool_h(x) # (B, C, H, 1) # permute(0,1,3,2):把 (B,C,1,W) 变成 (B,C,W,1),方便后面和 x_h 在 dim=2 上 cat x_w = self.pool_w(x).permute(0, 1, 3, 2) # (B, C, W, 1) y = torch.cat([x_h, x_w], dim=2) # (B, C, H+W, 1) y = self.act(self.bn1(self.conv1(y))) y_h, y_w = torch.split(y, [h, w], dim=2) # 再拆回高、宽两段 y_w = y_w.permute(0, 1, 3, 2) # (B, mid_channels, 1, W) a_h = self.gate(self.conv_h(y_h)) # (B, C, H, 1) a_w = self.gate(self.conv_w(y_w)) # (B, C, 1, W) return x * a_h * a_w # 广播相乘,得到带高、宽注意力的特征

4、我的task.py代码